- Why IBM MQ belongs in AI and RAG architectures

- Designing AI ingestion on IBM MQ? Start with resilience

- What IBM MQ’s role is — and is not

- The reference architecture: MQ to RAG

- A concrete example: support tickets feeding a knowledge assistant

- Is the MQ consumer different in this pattern?

- Monitoring IBM MQ when it feeds AI and RAG

- Testing MQ-to-RAG pipelines

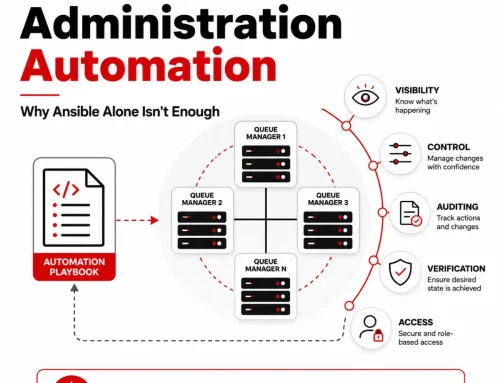

- Governance and team boundaries

- The operational takeaway

- FAQ

- TL;DR

- Endnotes

Using IBM MQ as a Reliable Ingestion Layer for AI and RAG

Enterprise AI projects rarely fail because the model was too hard to call. They fail because the right business data does not reach the right downstream system in a timely, reliable, and governable way.

That is why IBM MQ deserves a place in the AI conversation.

Not because IBM MQ is a vector database. Not because IBM MQ is a retrieval engine. Not because IBM MQ is the large language model. IBM MQ matters because many enterprises already use it to move high-value business events, records, updates, and requests between critical systems. IBM describes MQ as messaging middleware for the assured, secure, and reliable exchange of information between applications, systems, services, and files.1

That makes IBM MQ a strong fit for a very practical role in modern AI architectures: the ingestion and decoupling layer.

For organizations building retrieval-augmented generation, or RAG, that role is especially useful. IBM’s watsonx documentation describes RAG as a pattern in which a knowledge base is preprocessed into plain text and embeddings, stored in a vector index, and then searched at question time so the model can answer with grounded context.2 If the enterprise data that should feed that knowledge base already moves through IBM MQ, then MQ can become the dependable upstream path into the RAG pipeline.

In other words, the question is not whether IBM MQ “does AI.” The better question is this:

Can IBM MQ reliably feed the data that an AI or RAG application needs?

The answer is yes.

A particularly clear example is support operations. IBM’s RAG documentation explicitly lists customer support tickets in a content management system as a valid type of knowledge-base content for RAG.3 If support events, ticket updates, or case notes already pass through IBM MQ, then MQ can serve as the enterprise-grade path that feeds those records into a knowledge assistant.

That sounds straightforward, but it changes how teams should think about architecture, operations, monitoring, and testing. Once an MQ flow starts feeding a downstream AI pipeline, it is no longer enough to ask whether a message arrived. Teams also need to ask whether the message was transformed correctly, indexed correctly, retrievable later, and still flowing when dependencies outside MQ slow down or fail.

That is where architecture discipline matters. It is also where monitoring and testing become much more important than the AI hype cycle would suggest.

This article walks through that architecture, using a concrete example of support tickets on IBM MQ feeding a knowledge assistant. It then looks at what changes operationally, what should be monitored, and how to test these flows realistically before and after go-live.

Why IBM MQ belongs in AI and RAG architectures

Many enterprises already have the most valuable part of an AI project in place: the data path.

Business events, updates, approvals, status changes, service requests, ticket notes, and transaction messages often already move through IBM MQ. MQ is built to decouple producers and consumers, support reliable delivery, and simplify integration across heterogeneous systems.1 That makes it useful whenever an AI initiative depends on business data that originates in existing enterprise workflows.

RAG is one of the clearest examples.

A RAG system does not depend only on model quality. It depends on whether the right business content becomes part of the knowledge base in a form that can later be retrieved. IBM describes the RAG pattern as one in which source content is converted to plain text, vectorized, stored in a vector index, and then searched for relevant passages when a user asks a question.2 The better the incoming content and metadata, the better the downstream retrieval tends to be.

That means AI teams need an ingestion path that is stable, asynchronous, and already trusted by the business. IBM MQ can play exactly that role.

This is especially attractive in enterprises that do not want to force source applications to integrate directly with every downstream AI component. Instead of asking each producing system to talk directly to a vector pipeline, a model endpoint, or a search service, organizations can continue to put messages on MQ and let downstream services consume those messages on their own timelines.

That gives the business a cleaner separation of concerns:

- source applications publish business events

- IBM MQ handles transport and decoupling

- ingestion services prepare the content for AI use

- vector infrastructure handles retrieval

- the model handles answer generation

Architecturally, that is much more maintainable than collapsing everything into one oversized integration point.

Designing AI ingestion on IBM MQ? Start with resilience

Download Empowering Infrastructure Architects: Ensuring Resilience in Middleware Architectures for Modern Enterprises for practical guidance on building resilient middleware environments with real-time visibility, proactive monitoring, automation, and secure cross-team collaboration.

What IBM MQ’s role is — and is not

To use IBM MQ well in a RAG design, it helps to be very precise about its role.

IBM MQ is not the vector store.

IBM MQ is not the retriever.

IBM MQ is not the large language model.

IBM MQ is the messaging backbone that gets the right content from the right source systems to the right downstream processing services.1

That distinction matters because it prevents a lot of confusion in project planning.

A RAG system still needs a knowledge base. IBM’s documentation describes that knowledge base as content that is preprocessed, vectorized, and stored for retrieval.2 It still needs embeddings. IBM describes vector embeddings as numerical representations that help find passages most similar to a user’s question, and it notes that those vectors are stored in vector databases and retrieved with a vector index.4 It still needs retrieval logic, and often vector-store tuning, chunking decisions, and search settings.2 5

What MQ contributes is something different but equally important: a dependable path for moving business content into that downstream pipeline.

That is why the most useful phrase here is not “MQ for AI.” It is:

IBM MQ as a reliable ingestion layer for AI and RAG.

That phrasing is more accurate, more practical, and more useful for architects and engineers who actually have to build and run these systems.

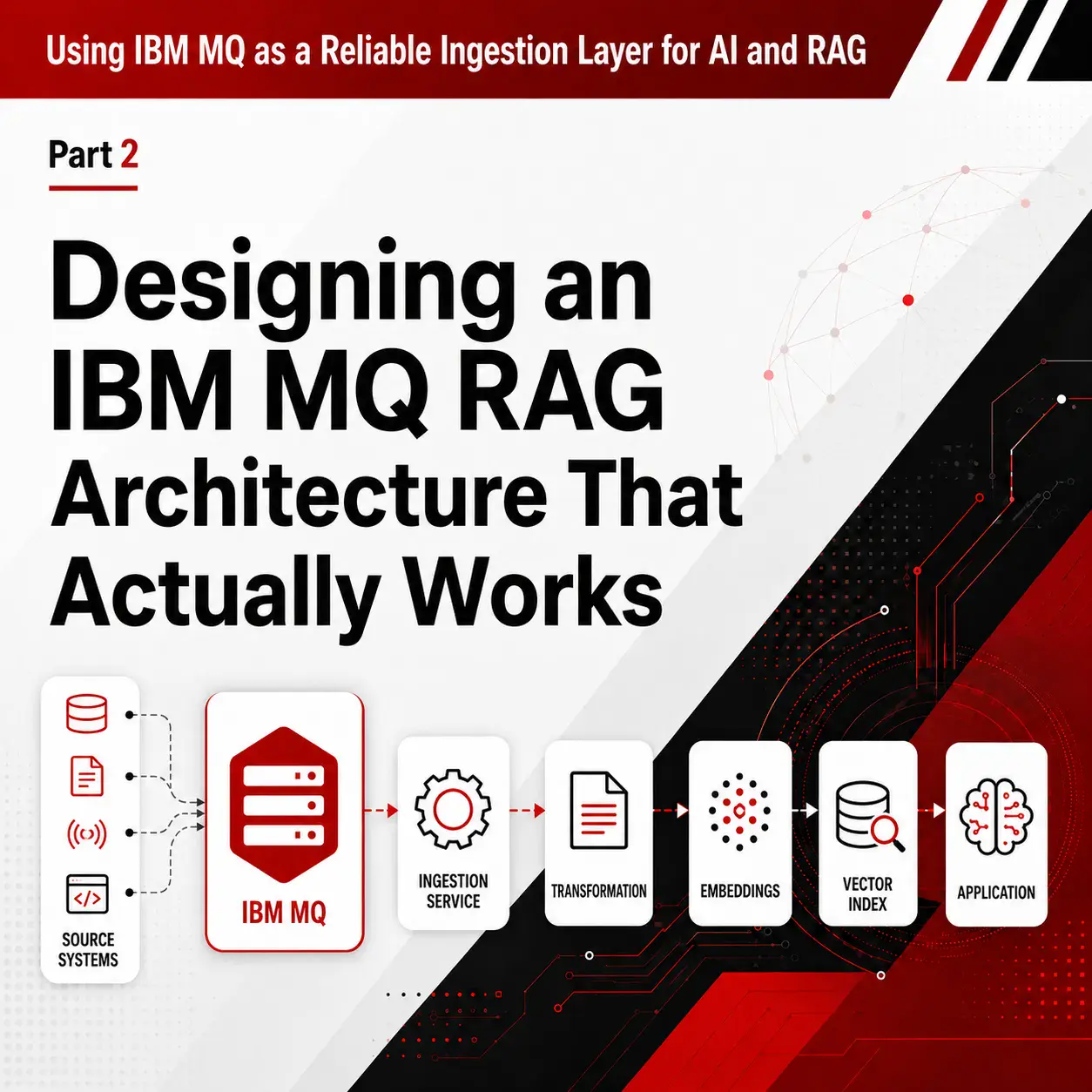

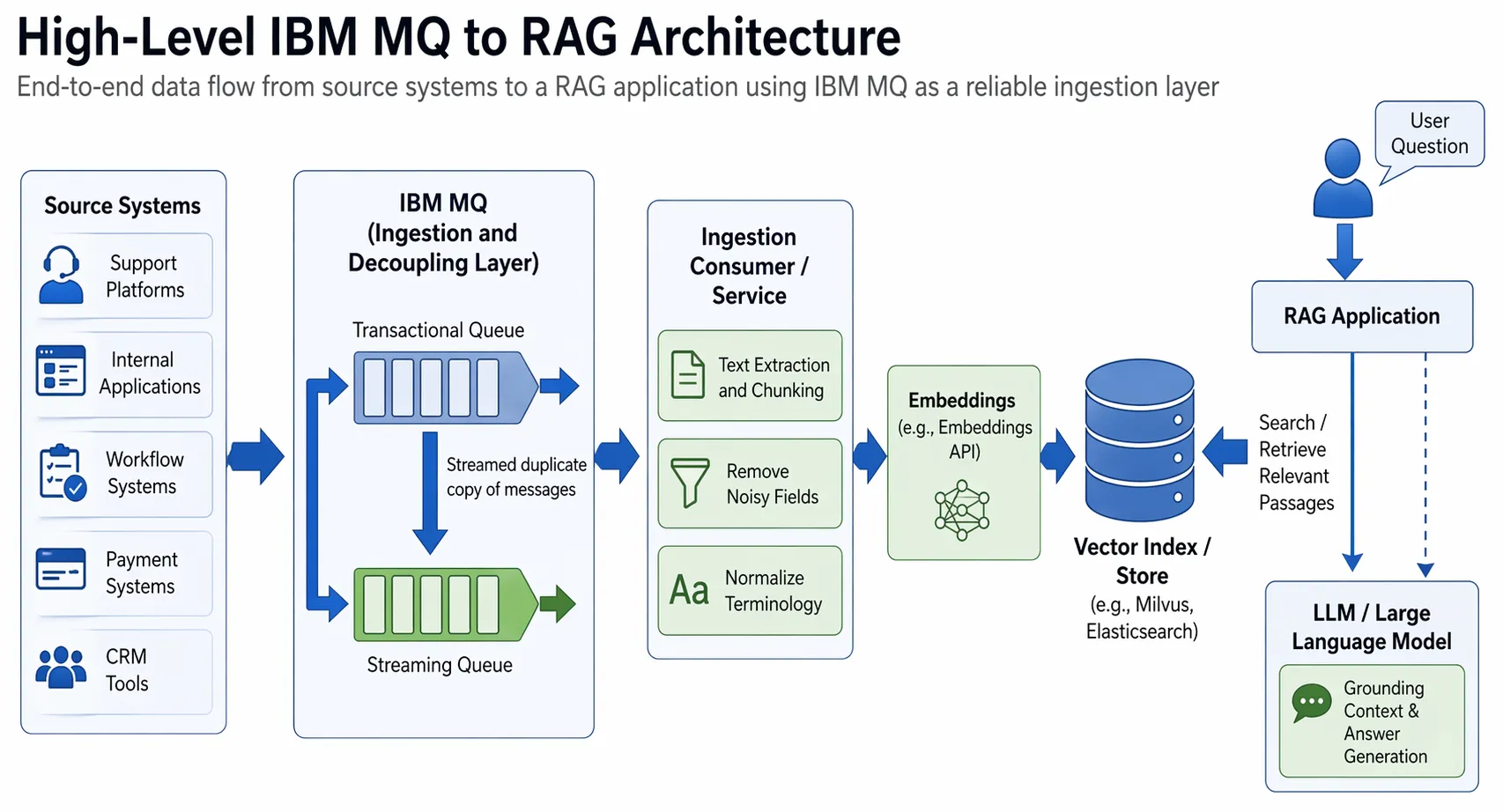

The reference architecture: MQ to RAG

A clean MQ-to-RAG architecture usually looks like this:

Source systems → IBM MQ → ingestion consumer → text extraction and chunking → embeddings → vector index → RAG application → model response

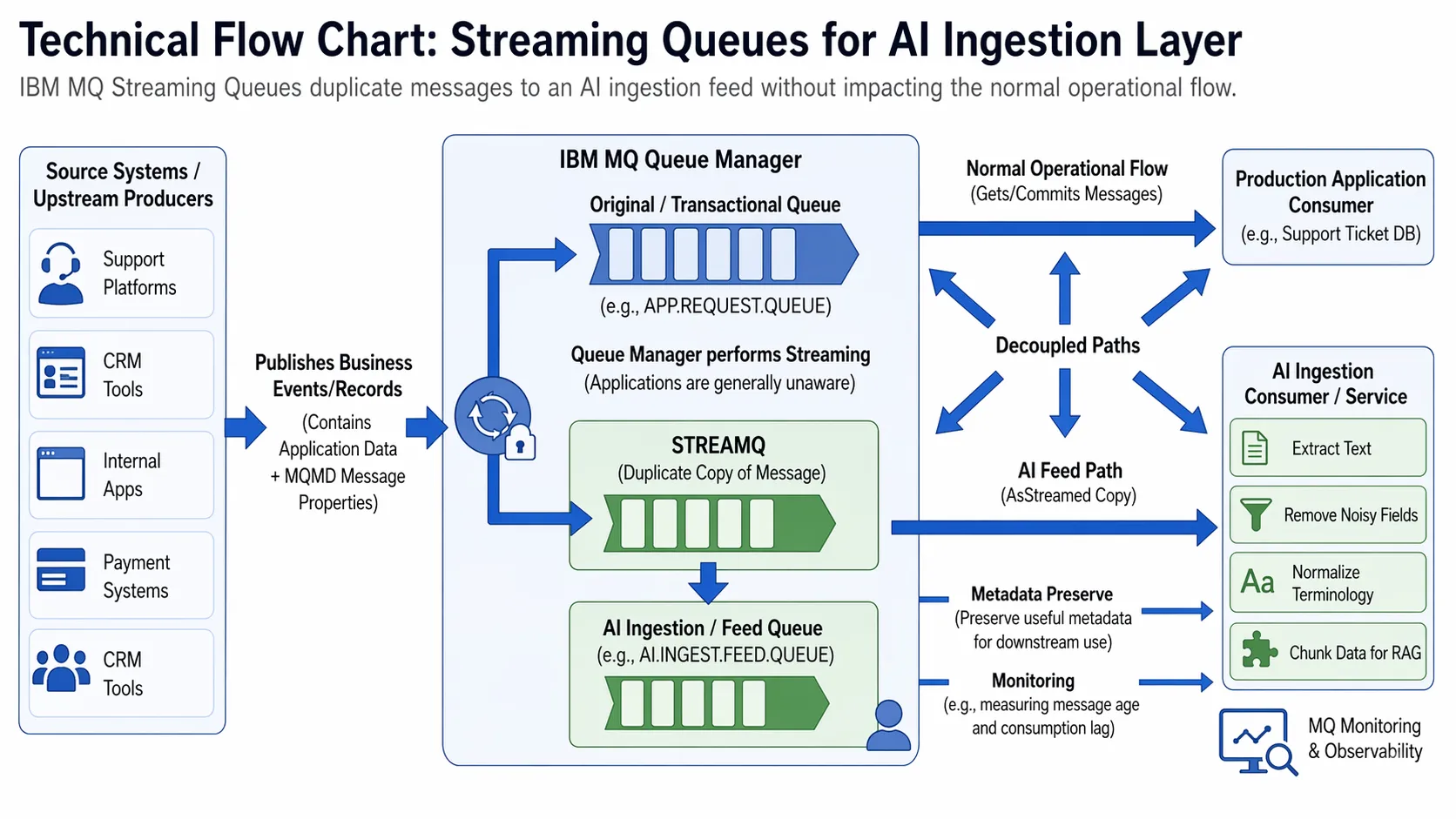

A high-level IBM MQ to RAG architecture, where IBM MQ acts as the reliable ingestion layer that feeds business content into an AI pipeline for extraction, chunking, embedding, indexing, retrieval, and grounded model responses.

Let’s unpack that.

1. Source systems publish business content to IBM MQ

The upstream producers might be support platforms, internal applications, workflow systems, payment systems, CRM tools, claims systems, or integration services. They publish events or records to IBM MQ because other downstream systems already depend on those messages.

IBM notes that an MQ message consists of application data plus message properties, and that the MQMD message descriptor contains control information that accompanies the application data between sender and receiver.6 7 That matters for RAG because the payload is only part of the story. Metadata such as message identifiers, timestamps, product names, environment markers, and correlation data can become valuable downstream indexing and retrieval attributes. But that only helps if the ingestion service maps those MQ-level fields deliberately into the downstream metadata model. In practice, fields such as message IDs, correlation IDs, put timestamps, source-system markers, and business properties should be carried forward into vector-store or retrieval-layer metadata so the content can later be filtered, traced, governed, and troubleshot effectively.

2. A dedicated ingestion consumer reads from an AI feed queue

The next step is usually not to let the AI pipeline compete with the primary transactional consumer on the same queue.

A better pattern is to give the AI side its own feed. IBM MQ’s streaming queues feature is especially useful here. IBM documents streaming queues as a way to create a duplicate copy of each message on a second queue, and it notes that the queue manager performs the streaming so that, in almost all cases, the applications putting to and getting from the original queue are unaware that streaming is taking place.8 9

That is a strong pattern for RAG because it lets teams feed the AI pipeline without disturbing the original operational flow. But architects should make an explicit choice about duplication behavior. If the AI feed is a non-critical side stream, best-effort duplication may be acceptable. If the downstream RAG or knowledge pipeline depends on complete ingestion of events, the design should not assume that any side-stream copy is automatically good enough. In that case, the duplication mode, downstream handling, and validation approach should be chosen to support completeness rather than convenience.

IBM MQ streaming queues can create a separate AI ingestion feed by duplicating messages from the operational flow, helping teams supply content to a RAG pipeline without changing or competing with the main transactional process.

3. The ingestion service converts messages into AI-ready content

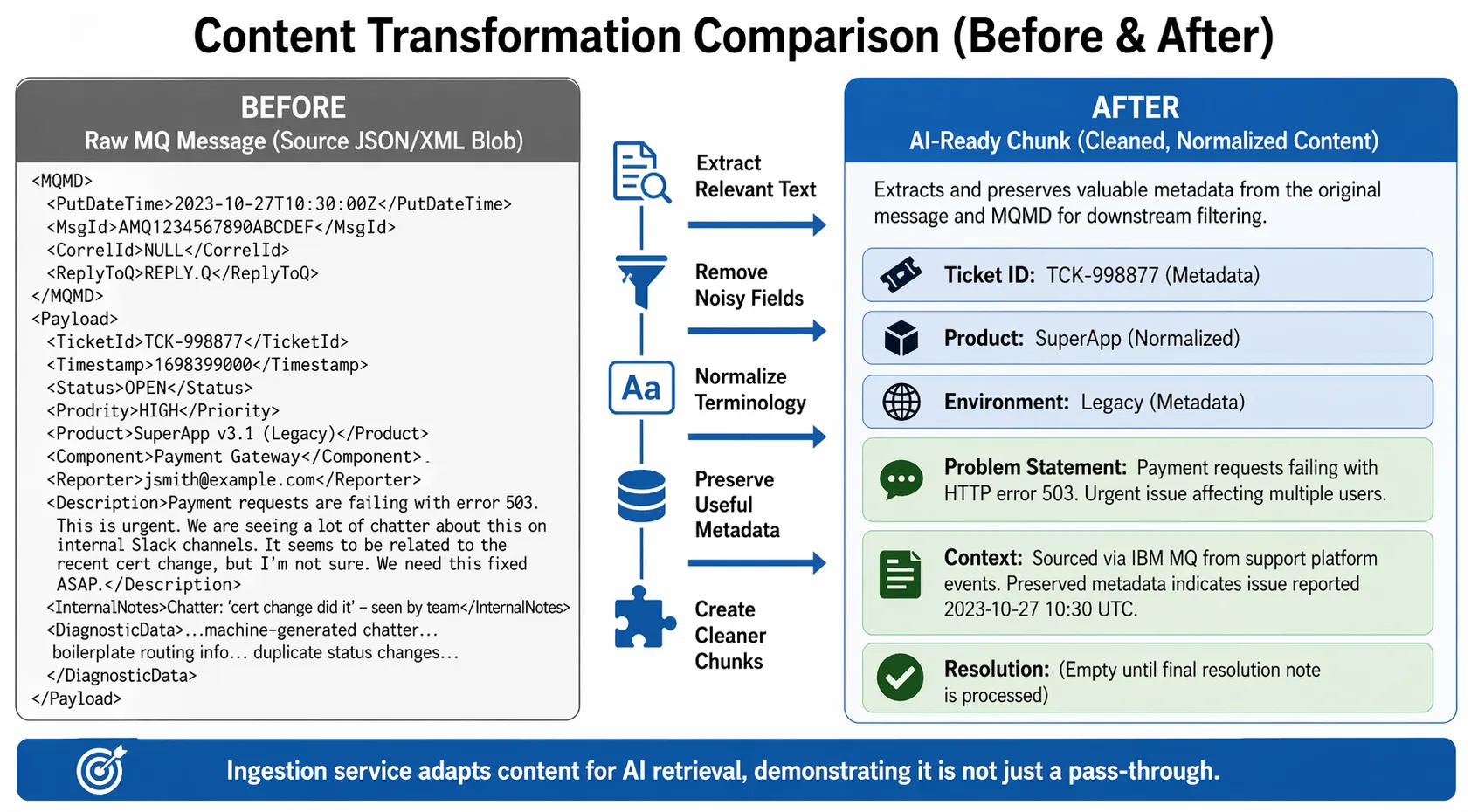

Raw MQ messages are rarely ideal grounding material as-is.

In a RAG pattern, IBM says the knowledge base is preprocessed to convert content to plain text and vectorize it, and that preprocessing can include text extraction from tables and images into text the model can interpret.2 In practice, that means the ingestion service usually needs to:

- extract the text that actually matters

- remove noisy fields

- normalize terminology

- preserve useful metadata

- split long records into chunks that will retrieve well later

IBM also recommends adapting knowledge-base content to make it more accessible to generative AI and improving the quality of AI responses through that adaptation.10 That advice applies directly here. A good MQ payload is not automatically a good RAG document.

Raw MQ messages often need to be refined before they are useful for RAG. This comparison shows how ingestion transforms operational message data into cleaner, structured content that can be embedded, indexed, retrieved, and used to ground model responses.

4. Embeddings are generated and stored in a vector index

IBM documents that vector embeddings encode the meaning of a sentence or passage numerically so relevant passages can be found later by similarity, and that those vectors are stored in vector databases and retrieved with a vector index.4

IBM also documents vector-store options including Milvus and Elasticsearch.11 12 The specific store can vary, but the architectural point stays the same: MQ delivers the content, and the vector layer makes that content retrievable later.

5. The RAG application retrieves and grounds answers

At question time, IBM describes the process this way: the question is converted to an embedding, relevant passages are found in the vector index, and those passages are passed to the model as contextual input.2 That is where the assistant actually becomes useful to end users.

Again, MQ is not doing the retrieval. MQ already did its job earlier by getting the content into the pipeline reliably.

Download Empowering Infrastructure Architects: Ensuring Resilience in Middleware Architectures for Modern Enterprises for practical guidance on resilience, visibility, and secure collaboration across complex middleware environments.

A concrete example: support tickets feeding a knowledge assistant

Now let’s make the pattern real.

Imagine a company whose support platform, case management system, or integration layer already publishes support-related events to IBM MQ. New ticket creation, status changes, escalations, engineer notes, and resolution updates all travel as messages between systems.

That organization wants to build an internal support assistant that can answer questions such as:

- Have we seen this issue before?

- What fixed similar incidents last quarter?

- Which known resolutions apply to this environment?

- What changed before this symptom started?

IBM explicitly identifies customer support tickets as a suitable content type for a RAG knowledge base.3 So this is not a stretch use case. It is a natural one.

Here is how the flow might work.

Step 1: Support events land on IBM MQ

A new ticket is created. The event is published to MQ with the case ID, timestamps, product family, severity, region, and problem description. Later updates add engineer notes, status changes, and a final resolution summary.

Because MQ messages can include both application data and message properties, the ingestion service does not have to guess where important context came from. But it should not just “preserve” that metadata in a general sense. It should explicitly map useful MQ and business fields into the metadata used by the downstream index and retrieval layer. In this example, that could include message ID, correlation ID, put timestamp, ticket ID, product, severity, region, and status, so retrieved results can later be filtered, traced back to source events, and governed more effectively.6 7

Step 2: A streamed copy feeds the AI ingestion queue

The main operational consumer still does whatever it was already doing with the message. At the same time, MQ streaming queues can create a duplicate message on an AI-specific queue.8 9

That means the support platform does not have to be rewritten to “know about” the knowledge assistant. The AI side becomes a downstream consumer of a purpose-built feed. But this is also where the architect should think carefully about completeness. If the knowledge assistant is expected to rely on a complete support history, the AI feed should not be treated as a casual best-effort side stream. The duplication behavior should be selected and validated based on the business importance of not missing ingestion events.

Step 3: The ingestion service prepares the ticket for retrieval

This is where many projects either get serious or get sloppy.

A raw support ticket often contains too much noise for good retrieval. Timestamps, boilerplate, internal routing notes, machine-generated chatter, or duplicate status changes can all reduce retrieval quality if ingested carelessly.

So the ingestion service typically creates cleaner chunks such as:

- problem statement

- environment details

- key symptoms

- diagnostic observations

- final resolution note

It also normalizes fields. Product names may have aliases. Error texts may vary slightly. Teams may use shorthand in one place and full terminology in another. IBM recommends adapting knowledge-base content so it is more accessible to the AI system and more likely to produce good responses.10

Step 4: The cleaned content is embedded and indexed

The assistant does not search the original MQ queue later. It searches the vectorized knowledge base built from the ticket content.2 4

That is the critical transition point from “message transport” to “AI retrieval.”

Step 5: The assistant answers grounded questionss

Later, a support engineer asks, “Why are payment requests timing out after a recent certificate change?”

The system embeds the question, retrieves similar prior tickets, finds a relevant resolution note, and passes that context to the model.2 The answer is then based on retrieved support knowledge rather than on model improvisation alone.

From the end user’s perspective, this looks like a smart support assistant.

From the architect’s perspective, it is a disciplined pipeline in which IBM MQ is the ingestion backbone.

Is the MQ consumer different in this pattern?

From MQ’s perspective, not fundamentally.

The AI ingestion service is still a consumer. It connects to MQ, opens the queue, gets messages, and handles transaction boundaries. IBM provides several ways to build these consumers, including IBM MQ classes for JMS and Jakarta Messaging, and IBM notes that its Java options support both Java-specific and standards-based messaging models.13

So the core MQ interaction remains familiar.

What changes is everything around the consumer.

First, the downstream work is usually more complex. After a message is retrieved, the service might parse the content, clean it, chunk it, call an embeddings API, write to a vector index, and possibly store additional metadata elsewhere.

Second, transaction design matters more. IBM MQ supports syncpoint coordination, commit, and backout behavior for recoverable operations.13 14 15 In a RAG flow, teams often need to decide exactly when the MQ unit of work should be committed relative to downstream indexing work. The MQ mechanics are standard; the design pressure is new because downstream dependencies are usually outside MQ’s own transactional boundary.

Third, idempotency matters more. If the consumer retries after a failure, the indexing side should not create inconsistent or duplicate records.

Fourth, metadata matters more. In a classic consumer, some MQ metadata is mainly operational. In a RAG consumer, that same metadata can become retrieval filters, provenance markers, grouping keys, or audit context later. That means the ingestion service should explicitly map useful MQ metadata into the downstream index and retrieval model rather than leaving it buried in the source message alone.

So the clean answer is this:

The MQ consumer is not interacting with MQ in some brand-new AI-specific way. It is still an MQ consumer. But it sits in a more dependency-heavy architecture, and that changes how carefully it must handle commits, retries, metadata, and downstream consistency.

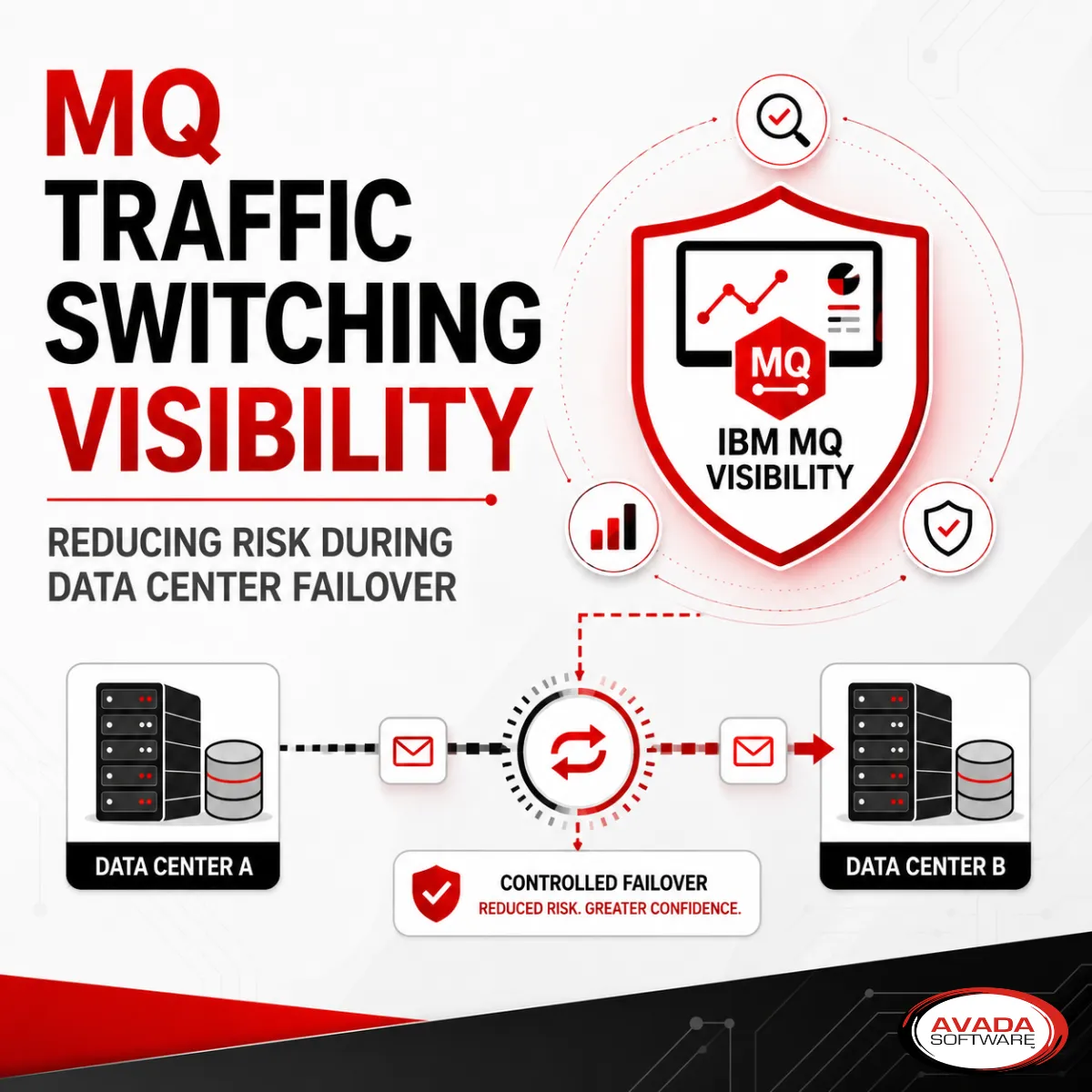

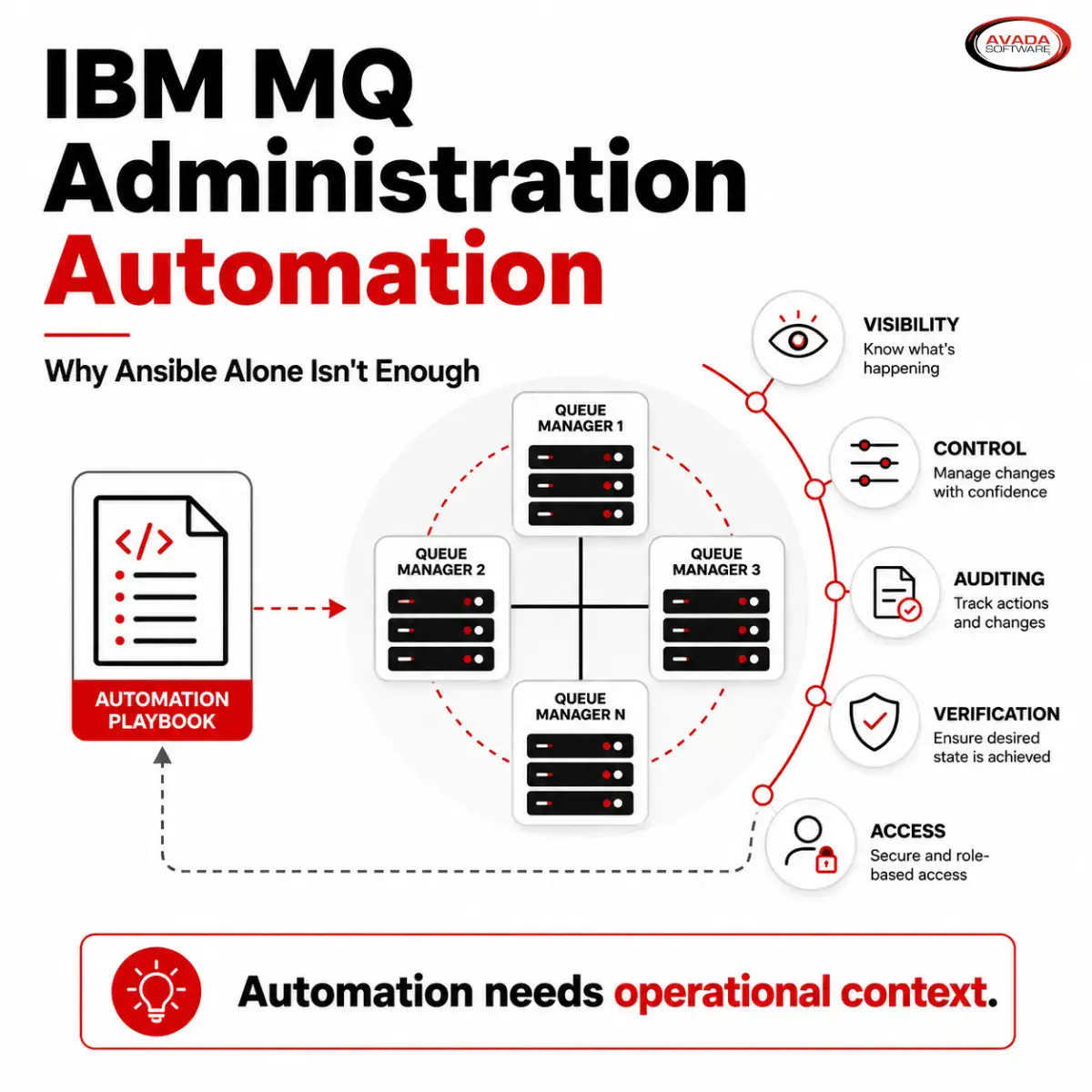

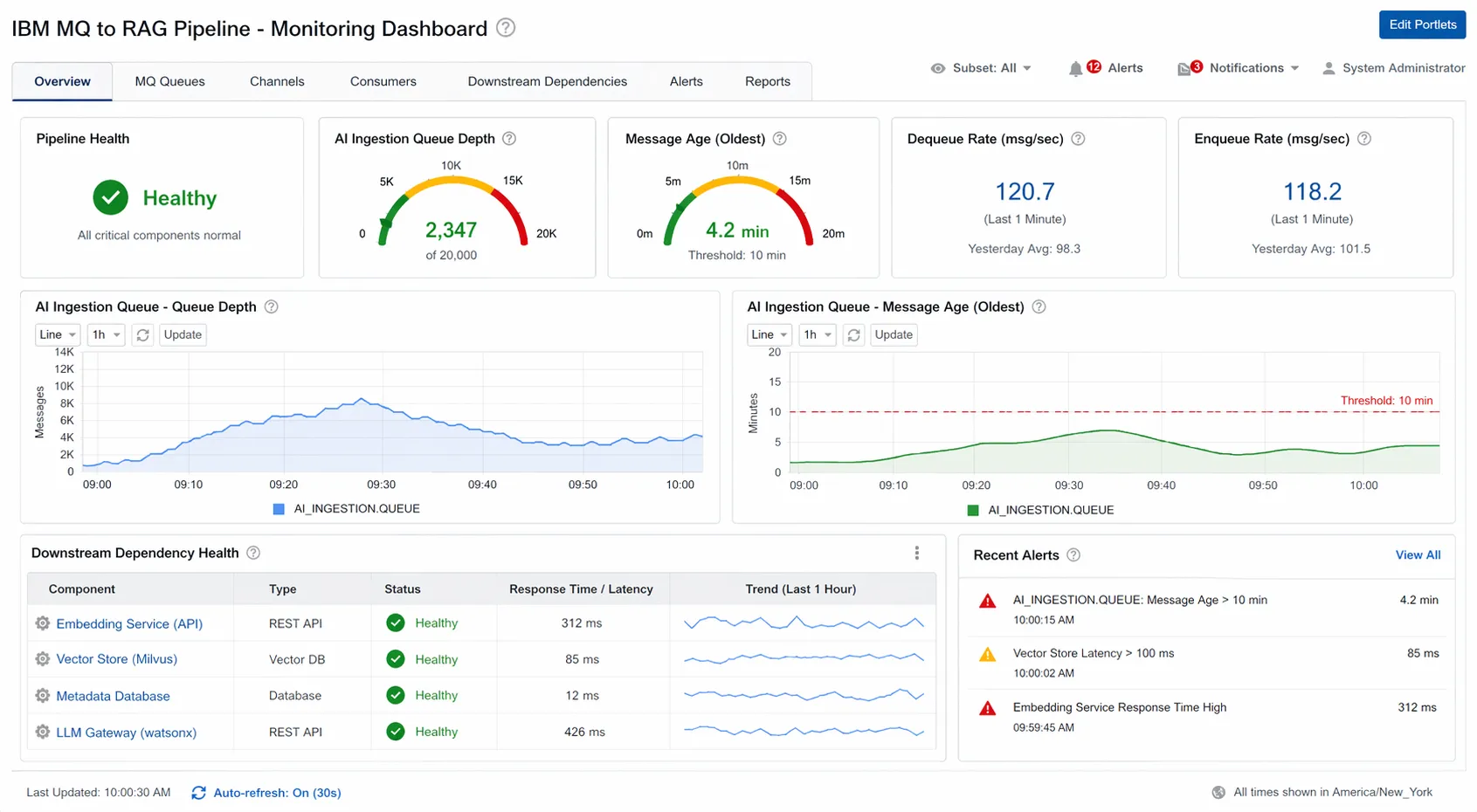

Monitoring IBM MQ when it feeds AI and RAG

This is where the conversation becomes operationally serious.

When MQ feeds a RAG pipeline, the basic MQ monitoring principles do not disappear. Queue managers are still queue managers. Queues are still queues. Channels are still channels. You still care about message buildup, consumption lag, status changes, and resource health.

But the blast radius expands.

A traditional MQ consumer problem is often contained within the messaging and application path. An MQ-to-RAG consumer can fail because of messaging issues, but it can also fail because the embeddings service is slow, the vector store is unreachable, the indexing job is stuck, the content transformation step is choking on unexpected payload structure, or the retrieval layer is returning poor results.

That means “Is MQ up?” is no longer enough.

An MQ-to-RAG pipeline needs more than basic “MQ is up” monitoring. A dashboard view can help teams track queue depth, message age, traffic rates, downstream dependency health, and alerts together so they can spot ingestion slowdowns before they affect retrieval or user-facing AI response

Download Proactive vs. Forensic – Middleware Health Monitoring to see how real-time, proactive monitoring helps teams catch queue backlogs, dependency slowdowns, and transaction issues before users feel the impact.

What should still be monitored on the MQ side

The familiar MQ indicators remain essential:

- queue depth

- message age

- enqueue and dequeue rates

- last get and last put activity

- uncommitted messages

- backout patterns

- queue manager health

- channel health and status

None of that becomes less important just because the downstream system is AI-related. In fact, message age often becomes even more useful because a backlog in an AI ingestion queue can hide behind apparently healthy upstream traffic.

A burst of support events arriving on schedule does not tell you whether the downstream knowledge pipeline is keeping up.

What changes in an MQ-to-RAG flow

The difference is that you now need to correlate MQ health with non-MQ dependencies.

For example:

- queue depth is rising because the ingestion consumer is not keeping up

- dequeue rate is steady, but indexing failures mean content never becomes retrievable

- MQ traffic looks healthy, but a downstream vector store is slow

- the consumer is alive, but a change in payload structure is causing chunking failures

- embeddings calls are timing out

- retrieval quality is degrading because the wrong fields are being indexed

That is why the best monitoring view for an MQ-to-RAG architecture is not MQ-only. It is end-to-end.

This is where Infrared360 fits naturally into the story. Its value here is not that MQ suddenly needs a special AI monitor. Its value is that teams need visibility into the MQ layer and the surrounding dependencies that determine whether the pipeline is actually working. In practical terms, that means watching the AI ingestion queue and its downstream dependencies together rather than in separate operational silos.

That is a strong fit for a monitoring strategy built around real-time visibility, cross-environment coverage, compound alerts, and shared operational context.

What alerts make sense in this pattern

Simple threshold alerts are rarely enough on their own.

In an MQ-to-RAG design, stronger alerts often combine conditions, such as:

- queue depth high and dequeue rate low

- message age rising and no recent gets

- consumer running but downstream dependency unavailable

- indexing exceptions increasing after a deployment

- queue draining normally but vector-store updates falling behind

Those are the kinds of conditions that help operations teams distinguish between a temporary burst and a pipeline problem that is about to affect users.

Testing MQ-to-RAG pipelines

Testing is the other section where this topic becomes especially practical.

In a traditional integration flow, a team might consider the test successful if a message was placed on the queue, consumed by the application, and processed without error.

That is not enough here.

When IBM MQ is used as the ingestion layer for a RAG pipeline, testing should separate MQ-side readiness from downstream AI quality. Those are related, but they are not the same thing.

1. MQ path testing

At the MQ layer, the team still needs to verify the basics:

- messages land on the correct source queue

- streamed or copied messages reach the intended AI ingestion queue

- message counts and timing behave as expected

- retries and backouts do not create obvious operational problems

- and, where duplicated feeds are used, the MQ-side design supports the required level of completeness

This is classic middleware testing, and it should not be skipped just because the project is branded as AI.

2. Payload realism testing

A synthetic “hello world” message proves almost nothing in an MQ ingestion path.

Real test traffic should vary in ways that reflect what the MQ environment will actually carry, including:

- message size

- optional fields

- content structure

- character encoding

- headers

- JMS properties where applicable

- XML, JSON, text, or mixed payload patterns

- duplicate or replay scenarios

- malformed but realistic edge cases

That matters because message-handling behavior often depends on structure, headers, and properties just as much as on the body of the message itself.

3. Routing and target confirmation

It is not enough to confirm that a message was generated.

Teams should also confirm that messages sent for testing are received at the intended MQ target, especially when using copied or streamed feeds for AI ingestion. In this kind of design, routing validation helps prove that the synthetic traffic was sent over the intended MQ path and arrived where expected.

If completeness matters, this is also where the team should validate that the chosen duplication approach is aligned with the business requirement for not missing ingestion events.

4. Volume, rate, and burst testing

This is especially important in MQ-to-RAG architectures.

The issue is often not whether a single message can be generated and delivered. The more important question is whether the MQ-side ingestion path behaves as expected when message traffic spikes. That is where queue buildup, rising message age, timing problems, or unexpected message-count effects may appear.

For that reason, testing should include planned scenarios for:

- controlled message counts

- repeated execution

- rate-based runs

- burst-volume runs

- scheduled readiness checks

- repeatable regression tests after change

5. Separate downstream quality testing

Beyond the MQ layer, teams may also want to test downstream ingestion, indexing, retrieval, and answer quality. Those are valid parts of a broader MQ-to-RAG test strategy.

But they answer different questions.

Proving that realistic messages can be generated, routed correctly, and delivered at the right rate or volume is not the same thing as proving that a downstream knowledge assistant will return the right answer. The MQ layer can be tested well while retrieval quality still needs separate review and tuning.

Where Infrared360 fits in testing

Infrared360’s synthetic transaction capabilities are well suited to the MQ side of this kind of design because they help teams create repeatable IBM MQ message test cases rather than relying on one-off manual tests. Message Test Cases are designed to send simple or complex messages to queues or topics, using MQI, JMS, or both, with configurable message counts, header data sets, JMS properties, content resources, static targets, queue-clearing options, audit history, notifications, and scheduled execution.

That makes Infrared360 particularly useful for several practical testing needs:

First, it supports repeatable message-generation tests rather than single-use checks.

Second, it supports realistic payload testing by allowing teams to define the content of generated messages, apply header data sets, and include JMS properties where needed.

Third, it supports message-count, repeat, interval, and schedule-based testing. That matters when teams want to run controlled bursts, recurring readiness checks, or repeated regression tests rather than isolated manual sends.

Fourth, it supports target-based MQ routing validation by letting teams send generated messages to defined queues or topics and confirm that those test messages are received where intended. That is especially useful when validating AI ingestion feeds that depend on copied or streamed MQ traffic.

Fifth, it supports operational discipline through audit history and notifications, which helps teams track when tests ran and whether recurring test cases are being executed as planned.

Taken together, that makes Infrared360 especially useful for proving the MQ side of this design with realistic, repeatable synthetic transactions for message flow, payload realism, routing confirmation, scheduling, and burst-volume readiness.

Download the IBM MQ Synthetic Transaction Testing Checklist to plan repeatable MQ message test cases for routing, payload realism, headers and JMS properties, scheduling, notifications, audit history, and burst-volume scenarios.

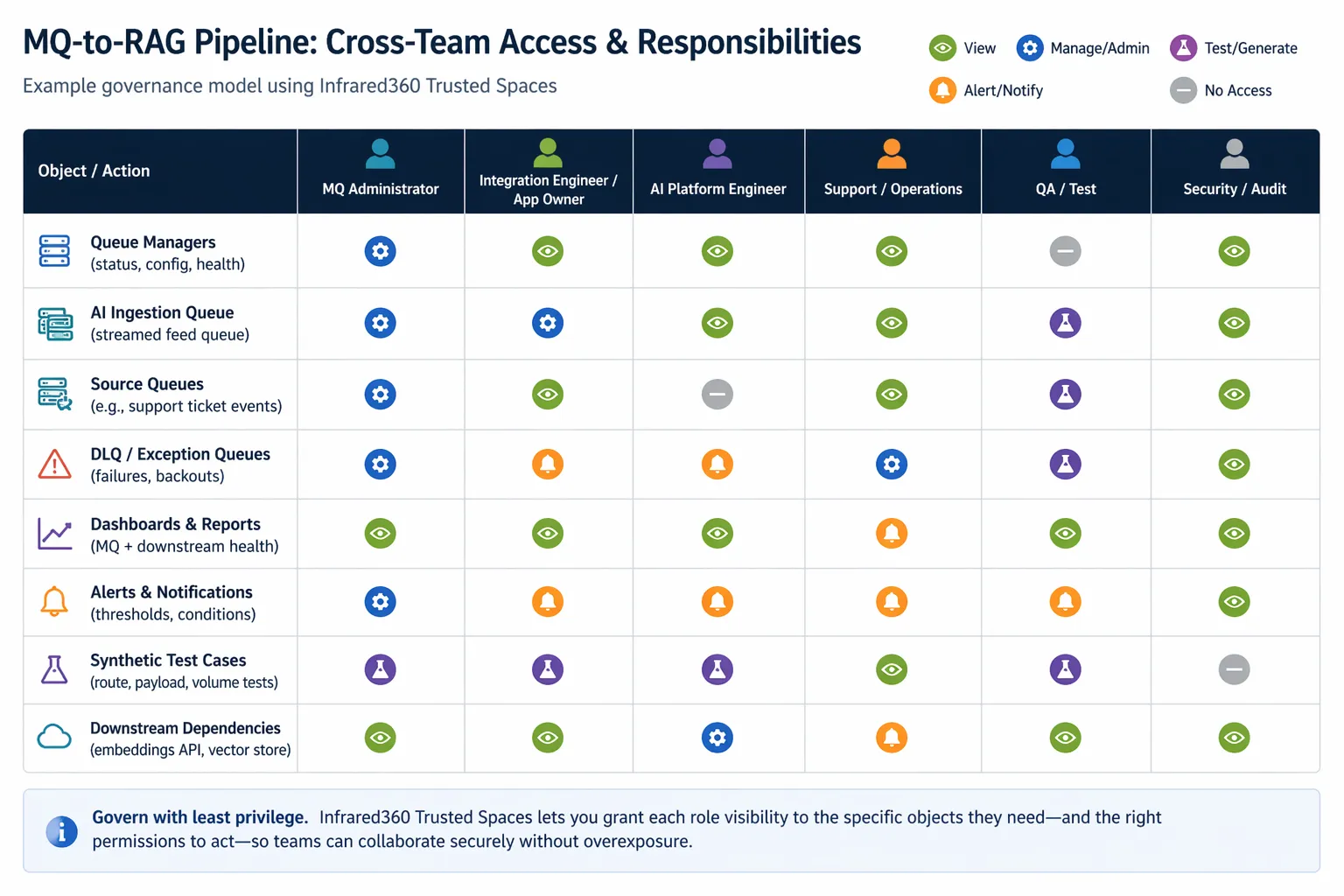

Governance and team boundaries

One of the less discussed realities of MQ-to-RAG architectures is that they cross team boundaries quickly.

The middleware team understands queueing, channels, and operational flow.

The application or integration team understands source payloads and business semantics.

The AI or platform team understands embeddings, vector indexes, and retrieval tuning.

The support or operations team needs enough visibility to recognize incidents and respond quickly.

That is why governance matters.

An organization does not want to solve a cross-team observability problem by simply giving every user broad access to everything. At the same time, people outside the core MQ admin group may need visibility into queues, flows, tests, alerts, or selected actions in order to support the service effectively.

Infrared360’s Trusted Spaces™ approach maps well to this kind of environment because it supports delegated visibility and controlled access instead of all-or-nothing access. In an MQ-to-RAG design, that can be useful when operations, development, integration, and support teams need to collaborate on the same pipeline without all needing unrestricted authority across the entire middleware estate.

That is not just a security point. It is a productivity point.

This governance chart illustrates how different teams can share responsibility for an MQ-to-RAG pipeline without giving everyone full access. Using a least-privilege model such as Infrared360 Trusted Spaces, organizations can give each role the visibility and actions they need across queues, alerts, tests, dashboards, and downstream dependencies while maintaining control and reducing overexposure.

Download a template to create and store a documented access model. Turn it into team runbooks, review checkpoints, and use to set up Trusted Spaces™ in Infrared360.

The operational takeaway

The most useful way to think about IBM MQ in this context is simple:

IBM MQ is not the intelligence. It is the dependable path that helps the intelligence get the right business context.

That makes IBM MQ highly relevant to AI and RAG projects, especially in enterprises where the most important business content is already moving through MQ.

But that relevance comes with a responsibility.

Once MQ feeds a downstream RAG pipeline, the organization must think beyond message transport alone. It must think about ingestion design, metadata preservation, queue separation, downstream dependency health, retrieval quality, and realistic testing.

That is why the operations side of the story matters so much.

A successful MQ-to-RAG project is not only about getting messages to a consumer. It is about making sure the full path stays observable, testable, supportable, and trustworthy over time.

That is also why the monitoring and testing sections should not be side notes in the strategy. They should be central.

And that is where Infrared360 has a natural role to play: helping teams monitor the end-to-end path more effectively and validate it with realistic synthetic transactions, including repeatable regression, routing, and burst-volume testing before problems surface in production.

FAQ

Can IBM MQ be used with AI applications?

Yes. IBM MQ can serve as a reliable ingestion and decoupling layer for AI applications by moving business events and records from source systems to downstream ingestion services, vector pipelines, and related processing components.1 2

Is IBM MQ itself a RAG engine?

No. IBM MQ is messaging middleware, not the vector store, retriever, or language model. In a RAG architecture, MQ moves the source content to downstream services that preprocess, embed, store, retrieve, and ground responses.1 2 4

What is a good MQ-to-RAG use case?

Support tickets are a strong example. IBM explicitly identifies customer support tickets in a content management system as a valid knowledge-base source for RAG.3

Should the AI pipeline consume from the same MQ queue as the production application?

Usually, a separate feed is better. IBM MQ streaming queues can create a duplicate copy of each message on a second queue, and IBM notes that the queue manager performs the streaming so the original applications are generally unaware of it.8 9

Is the MQ consumer different in a RAG architecture?

From MQ’s perspective, not fundamentally. It is still a consumer that gets messages and manages commits or backouts. What changes is the surrounding architecture, including downstream indexing work, metadata handling, retries, and consistency requirements.13 14 15

What should you monitor when MQ feeds a RAG pipeline?

You should still monitor classic MQ indicators such as queue depth, message age, dequeue behavior, channel health, and consumer activity. But you should also correlate those with downstream dependencies such as ingestion services, embeddings calls, vector-index updates, and retrieval quality checks.1 2 5

Why is message metadata important in MQ-to-RAG designs?

Because message properties and MQMD control information can become useful indexing, provenance, and retrieval-filter data later in the pipeline.6 7

Are synthetic transactions useful for MQ-to-AI pipelines?

Yes. They are especially useful for validating routing, payload realism, headers and properties, recurring canary tests, regression testing after changes, and burst or high-volume behavior. That is especially important in MQ-to-RAG pipelines, where downstream parsing, enrichment, embedding, and indexing services may become bottlenecks before MQ itself does. Synthetic transactions help prove that the MQ-side ingestion path works under realistic conditions, not just ideal conditions.

Do synthetic transactions prove that the assistant will answer well?

Not by themselves. They are excellent for validating MQ-side transport and ingestion readiness, but teams should still test retrieval quality, chunking strategy, search settings, and grounded answer quality separately.5 10 16

What should teams tune if retrieval quality is weak?

IBM recommends testing the vector index, adjusting search settings, and reviewing chunk size, overlap, and the number of search results included as context when answers are poor or incomplete.5 16

TL;DR

IBM MQ can play a valuable role in AI and RAG architectures — not as the model, retriever, or vector store, but as the reliable ingestion and decoupling layer that moves business events and records into downstream AI pipelines. To learn more see this section in the post.

That is especially useful in enterprises that already run critical workflows through MQ. Rather than forcing source systems to integrate directly with AI infrastructure, teams can use IBM MQ to feed a dedicated ingestion path where content is cleaned up, metadata is preserved, embeddings are generated, and a vector index supports retrieval. A practical example is support tickets on MQ feeding an internal knowledge assistant. To learn more, see this section.

But the real challenge is not just the architecture. It is the operational model around it. Once MQ feeds a RAG pipeline, teams need to think carefully about monitoring, testing, and cross-team governance. For more, see those sections, starting here.

On the monitoring side, classic MQ indicators such as queue depth, message age, dequeue rates, and channel health still matter — but they are no longer enough by themselves. Teams also need visibility into the downstream dependencies that determine whether the pipeline is actually working. That makes end-to-end operational visibility much more important than an MQ-only view. See the monitoring section for more details.

On the testing side, success is not just “a message was sent.” Teams should validate realistic payloads, headers and properties, routing to the intended MQ targets, duplication behavior, and burst-volume scenarios. Repeatable synthetic transaction testing is especially useful here because it helps prove the MQ side of the design before changes or load spikes affect production. See the Testing section for detailed information.

On the governance side, MQ-to-RAG architectures cross team boundaries quickly. Middleware, integration, AI/platform, and operations teams all need the right visibility into the same pipeline — but not the same level of access. That is where delegated visibility and controlled permissions become important. For more, see the Governance section.

Take the next step

Now that you understand where IBM MQ fits in an AI or RAG architecture, the next step is understanding what it takes to run that kind of environment well — especially when it comes to monitoring, operational resilience, automation, and secure collaboration across teams:

Download Empowering Infrastructure Architects: Ensuring Resilience in Middleware Architectures for Modern Enterprises for practical guidance on building resilient middleware environments with real-time visibility, proactive monitoring, automation, and secure cross-team collaboration.

Endnotes

- IBM MQ 9.4 Quick Start Guide / IBM MQ overview. IBM describes MQ as facilitating the assured, secure, and reliable exchange of information between applications, systems, services, and files, and as robust, secure, reliable messaging middleware.

https://www.ibm.com/docs/en/ibm-mq/9.4.x?topic=mq-94-quick-start-guide - IBM watsonx RAG pattern documentation. IBM describes the RAG flow as preprocessing source content into plain text and embeddings, converting the question to an embedding, retrieving relevant passages, and supplying that context to the model.

https://www.ibm.com/docs/en/watsonx/saas?topic=solutions-retrieval-augmented-generation - IBM watsonx RAG knowledge-base examples. IBM includes customer support tickets in a content management system as an example knowledge source for RAG.

https://dataplatform.cloud.ibm.com/docs/content/wsj/analyze-data/fm-rag.html?audience=wdp&context=wx&locale=en - IBM watsonx vector embeddings and vector index guidance. IBM explains that embeddings are numerical representations used to find similar passages, and that vectors are stored in vector databases and retrieved with a vector index.

https://www.ibm.com/docs/en/watsonx/saas?topic=solutions-retrieval-augmented-generation - IBM vector index testing and tuning guidance. IBM documents submitting test queries to a vector index, adjusting search settings, and tuning Top K and related settings when answers are poor.

https://dataplatform.cloud.ibm.com/docs/content/wsj/analyze-data/fm-prompt-data-index-manage.html?context=wx - IBM MQ messages concept documentation. IBM states that an MQ message consists of application data and message properties.

https://www.ibm.com/docs/en/ibm-mq/9.2.x?topic=concepts-mq-messages - IBM MQMD documentation. IBM states that MQMD contains the control information that accompanies the application data when a message travels between sending and receiving applications.

https://www.ibm.com/docs/en/ibm-mq/9.2.x?topic=mqi-mqmd-message-descriptor - IBM MQ streaming queues. IBM documents streaming queues as creating a duplicate or near-identical copy of each message on a second queue.

https://www.ibm.com/docs/en/ibm-mq/9.4.x?topic=configuring-streaming-queues - IBM MQ streaming queue behavior. IBM states that streaming is performed by the queue manager rather than the application, and that the original producer and consumer are generally unaware of it.

https://www.ibm.com/docs/en/ibm-mq/9.3.x?topic=queues-streaming-configuration - IBM guidance on optimizing a RAG knowledge base. IBM recommends adapting knowledge-base content so it is more accessible to generative AI and more likely to produce higher-quality results.

https://www.ibm.com/docs/en/watsonx/saas?topic=generation-optimizing-your-rag-knowledge-base - IBM Milvus vector-store guidance. IBM documents Milvus as a supported vector-store option for watsonx-related RAG workflows.

https://www.ibm.com/docs/en/watsonx/w-and-w/2.1.0?topic=index-setting-up-milvus-vector-store - IBM Elasticsearch vector-store guidance. IBM documents Elasticsearch as a search and analytics engine with vector-store capabilities and notes its durable, scalable architecture.

https://www.ibm.com/docs/en/watsonx/saas?topic=autoai-choosing-vector-store-rag-experiment - IBM MQ Java and JMS/Jakarta Messaging documentation. IBM documents IBM MQ classes for JMS and Jakarta Messaging and describes them as the Java messaging providers supplied with IBM MQ.

https://www.ibm.com/docs/en/ibm-mq/9.3.x?topic=interfaces-mq-classes-jmsjakarta-messaging - IBM MQ syncpoint considerations. IBM documents that IBM MQ operations can participate in units of work and transaction coordination.

https://www.ibm.com/docs/en/ibm-mq/9.2.x?topic=work-syncpoint-considerations-in-mq-applications - IBM MQ commit and backout behavior. IBM documents commit, backout, syncpoint, and unit-of-work behavior for recoverable MQ get and put operations.

https://www.ibm.com/docs/en/ibm-mq/9.2.x?topic=queuing-committing-backing-out-units-work - IBM guidance on chunking and retrieval settings. IBM notes that when answers are missing or incomplete, teams should review chunk size, overlap, and search-result settings.

https://dataplatform.cloud.ibm.com/docs/content/wsj/analyze-data/fm-prompt-data-index-create.html?audience=wdp&context=wx

More Infrared360® Resources