How the IBM Confluent Acquisition Changes MQ and Kafka in 2026

Imagine you are an enterprise architect building a modern data system. You need to safely move highly sensitive financial transactions. You also need to stream millions of user clicks to your analytics dashboard in real time.

For a decade, IT directors fiercely debated the best way to handle this. Many thought they had to prioritize one approach: either the strict transactional precision of IBM MQ or the high-throughput streaming capabilities of Confluent Kafka 4 5. In reality, the two approaches complemented each other, combining guaranteed, transactional message delivery (MQ) with high-throughput, scalable event streaming (Kafka) 5. But this still left the issue of operational fragmentation: architects had to manage divided technology estates and build fragile third-party connections just to keep their data pipelines synchronized 2.

IBM recently finalized an $11 billion acquisition of Confluent 1. Overnight, the narrative shifted. Mission-critical transactions and analytical telemetry now live in the same ecosystem, making it easier to create a single, trusted foundation for enterprise-wide intelligence. The enterprise technology landscape has entered a new era of unified teamwork under IBM’s consolidated Smart Data Platform 3.

Bringing these massive technologies together presents real opportunities for enterprises: together, they can help organizations marry real-time insight to trusted execution. However, some existing Confluent customers are concerned about the strategic implications, including total ecosystem vendor lock-in, potential licensing overhauls, and critical monitoring blind spots 10.

Let us break down what this actually means for your daily operations, how MQ and Kafka function together today, and how to protect your infrastructure in 2026.

The Historical Dichotomy: Queueing vs. Streaming

Let us look back at why enterprise architects had to manage two completely different systems in the first place. For over a decade, you faced a strict divide between precision message queueing and high-volume event streaming 12. These two platforms were built with fundamentally opposing design philosophies. This forced organizations to manage heavily siloed data operations.

The IBM MQ Architectural Foundation

IBM MQ operates like the digital equivalent of certified mail. Introduced over thirty years ago, it solves a highly complex problem. It provides the reliable and asynchronous exchange of data across distributed enterprise systems.

- Precision and ACID Compliance: IBM MQ primarily operates on a point-to-point architecture. This design places an absolute premium on message durability and strict chronological ordering 4.

- Transactional Integrity: It provides exceptionally strong transaction support with full ACID compliance.

- The Destructive Read Paradigm: In its most common transactional implementation, MQ uses a destructive read paradigm where the system deletes data from the queue once a consumer application successfully confirms receipt. While MQ supports non-destructive message browsing, the destructive model is what ensures the system remains “clean” and highly efficient for financial workflows.

- Core Use Cases: This architectural design guarantees exactly-once delivery natively. Because it is designed to prevent a single message from being processed twice or lost in transit, MQ remains the undisputed standard for financial clearinghouses and payment gateways.
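The destructive read behavior described above can be illustrated with a small in-memory model. This is only a sketch: `ToyQueue` and its methods are hypothetical names invented for this example and are not the IBM MQ API. It assumes a single consumer and one open unit of work at a time.

```python
from collections import deque


class ToyQueue:
    """In-memory stand-in for a point-to-point queue (not the IBM MQ API)."""

    def __init__(self):
        self._messages = deque()
        self._in_flight = None  # message taken under an open unit of work

    def put(self, message):
        self._messages.append(message)

    def get(self):
        """Take the oldest message; it stays in flight until commit or backout."""
        if self._in_flight is not None:
            raise RuntimeError("a unit of work is already open")
        if not self._messages:
            return None
        self._in_flight = self._messages.popleft()
        return self._in_flight

    def commit(self):
        """Consumer confirmed processing: the message is gone for good."""
        self._in_flight = None

    def backout(self):
        """Processing failed: return the message to the head of the queue."""
        if self._in_flight is not None:
            self._messages.appendleft(self._in_flight)
            self._in_flight = None

    def depth(self):
        return len(self._messages)


queue = ToyQueue()
queue.put("payment-1")
queue.put("payment-2")

first = queue.get()      # "payment-1" is now in flight
queue.backout()          # simulated failure: message goes back on the queue
retried = queue.get()    # the same message is delivered again
queue.commit()           # confirmed: "payment-1" is removed permanently
second = queue.get()
queue.commit()
```

The point of the sketch is that a message leaves the queue only after the consumer confirms it, and a failed attempt puts it back in its original position rather than losing it.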

The Confluent Kafka Architectural Foundation

If MQ is certified mail, Kafka and Confluent are a live radio broadcast 5. Kafka was built for a continuous firehose of data.

- Volume and Replayability: Kafka employs a distributed publish-subscribe model. Kafka does not destroy messages after reading. Instead, it functions as a persistent and distributed commit log. Events are appended sequentially and stored on disk. Multiple consumer groups can read from the exact same topic simultaneously. If an application crashes, it can restart and replay earlier events from the log 14.

- Horizontal Scalability: Engineered for massive throughput, Kafka scales horizontally across distributed brokers to process millions of data points per second.

- Core Use Cases: Kafka dominates in scenarios requiring high-volume data ingestion. This makes it perfect for real-time user analytics, IoT telemetry, and fraud detection algorithms.
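To contrast with the queue model, the commit-log behavior described above can be sketched in a few lines. `ToyLog` is a hypothetical in-memory model of a single partition, not the Kafka client API; retention, partitioning, and replication are deliberately left out.

```python
class ToyLog:
    """In-memory stand-in for one topic partition (not the Kafka client API)."""

    def __init__(self):
        self._events = []      # append-only; reads never delete anything
        self._committed = {}   # consumer group -> next offset to read

    def append(self, event):
        self._events.append(event)
        return len(self._events) - 1  # offset of the new event

    def poll(self, group, max_events=10):
        """Return events from the group's committed offset onward."""
        start = self._committed.get(group, 0)
        return self._events[start:start + max_events]

    def commit(self, group, next_offset):
        self._committed[group] = next_offset

    def seek(self, group, offset):
        """Move a group to a chosen offset, for example to replay history."""
        self._committed[group] = offset


log = ToyLog()
for click in ["click-a", "click-b", "click-c"]:
    log.append(click)

analytics = log.poll("analytics")
log.commit("analytics", len(analytics))

fraud = log.poll("fraud")         # a separate group still sees the full history
log.seek("analytics", 0)          # rewind after a simulated crash
replayed = log.poll("analytics")  # the same events are read again
```

Each consumer group tracks its own position, so one group reading or rewinding has no effect on what another group sees.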

The Traditional Integration Challenge: Managing the Gap

Before the 2026 acquisition, using both IBM MQ and Kafka was common, but it required significant architectural heavy lifting. While connectivity was possible, the “Old Way” often resulted in an operational burden for enterprise architects.

- Fragile Connectivity: Integrating these two distinct architectures often relied on complex third-party connections or manually configured bridges. While IBM provided native options such as Kafka Connect connectors for MQ and MQ streaming queues, these were often treated as “bolted-on” solutions rather than a unified data fabric.

- Divided Technology Estates: Organizations were forced to manage heavily siloed data operations. This led to rapid infrastructure sprawl, as teams had to maintain separate security protocols, support paths, and expertise for each platform.

- Fragmented Data Governance: Keeping pipelines synchronized without delays created immense operational overhead. There was no single, trusted foundation for enterprise-wide intelligence, as mission-critical transactions in MQ and analytical telemetry in Kafka remained strategically disconnected.

The March 2026 acquisition aims to replace this era of “management by workaround” with native synergy under the Smart Data Platform.

The Post-Acquisition Reality: IBM’s Unified Smart Data Platform

The March 2026 acquisition permanently transitions the relationship between IBM MQ and Confluent Kafka from a model of “competitive coexistence” to one of native synergy 7, 9. IBM is now actively merging Confluent’s cloud-native streaming fabric into its own core enterprise pillars to create what it calls the Smart Data Platform 3.

This integration operates across multiple architectural layers, embedding Confluent directly into the IBM Z mainframe ecosystem, IBM webMethods, and the watsonx.data artificial intelligence platform 25 27 30. The intended result is a unified data foundation where static transactional data and continuously flowing telemetry operate together 2.

How MQ and Kafka Work Together Now

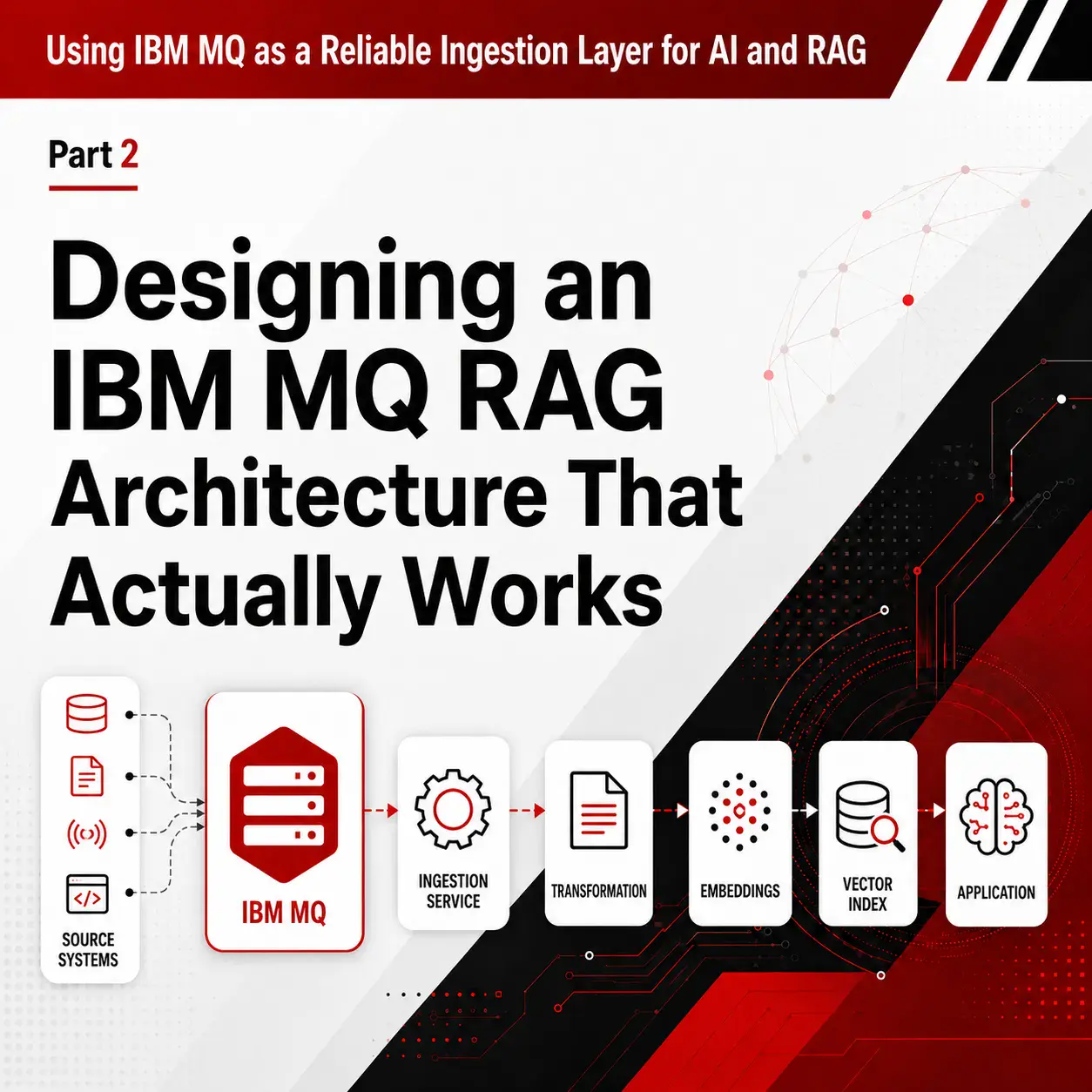

Enterprise architects no longer have to build custom bridges to unify their messaging environments. The new blueprint relies on a consolidated pipeline where both technologies play strictly complementary roles.

- MQ acts as the Transactional System of Record: IBM MQ continues to handle the destructive-read delivery for mission-critical business transactions. It sits at the absolute edge of core applications to capture the financial event with unwavering compliance 2.

- Kafka acts as the Real-Time Analytical Nervous System: Those MQ transactions no longer stop at the application layer. They are now ingested natively and securely into the Confluent streaming fabric 15.

- Fueling AI and Insights: Kafka takes the regulated transactional data, combines it with operational telemetry, and distributes it horizontally. This fuels real-time analytics and generative AI models inside watsonx.data 8.

In this new reality, you capture the transaction in MQ and instantly stream its context via IBM Confluent. This creates a single trusted foundation for enterprise-wide intelligence.
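The capture-then-stream flow above can be sketched as a tiny bridge loop. Everything here is an assumption made for illustration: a `deque` stands in for an MQ queue, a list stands in for a Kafka topic, and `bridge_once`, `reliable_publish`, and `failing_publish` are hypothetical helpers, not part of any IBM or Confluent product.

```python
from collections import deque


def bridge_once(queue, log, publish):
    """Move one message from a queue to a log.

    The message is removed from the queue only after `publish` succeeds.
    `publish` is a hypothetical callable that appends to the log and may raise.
    """
    if not queue:
        return False
    message = queue[0]          # look at the head without removing it
    try:
        publish(log, message)   # stream the event first
    except Exception:
        return False            # leave the message on the queue for a retry
    queue.popleft()             # destructive read only after a successful publish
    return True


def reliable_publish(log, message):
    log.append(message)


def failing_publish(log, message):
    raise ConnectionError("streaming layer unavailable")


transactions = deque(["txn-1001", "txn-1002"])
topic = []

failed = bridge_once(transactions, topic, failing_publish)
moved = bridge_once(transactions, topic, reliable_publish)
```

Ordering the steps this way gives at-least-once behavior: a crash between the publish and the removal would deliver the same transaction twice, so downstream consumers in a design like this would need to tolerate duplicates.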

Strategic Implications for Enterprise Architecture

The technical case for bringing IBM MQ and Confluent Kafka closer together is easy to understand. IBM has been clear that the acquisition is about combining event streaming with its strengths in data management, integration, and enterprise infrastructure. The goal is a broader Smart Data Platform for real-time, AI-ready data 1 3 7.

But for enterprise architects, the more important question is not whether the vision is compelling. It is how that vision changes platform strategy, operating assumptions, and long-term dependency choices.

The most balanced way to think about the strategic implications is this. The acquisition does not automatically create a problem, but it does create a new set of questions. Architects should distinguish between what IBM has explicitly announced and what customers reasonably may want to monitor over time. IBM has announced the direction of the platform. It has not announced every downstream commercial, operational, or roadmap decision that might matter to current Confluent users.

Licensing and Commercial Model: A Concern to Watch

One area worth watching is commercial packaging and pricing. Some analysts and platform teams have raised concerns that IBM Confluent customers could eventually face changes in how services are packaged or priced 10. That concern is understandable. IBM uses Virtual Processor Core (VPC) as a licensing metric across parts of its software portfolio 36.

At the same time, IBM currently lists Confluent Cloud as an XaaS offering. This means it would be premature to state that Confluent customers are definitely headed for a VPC-style pricing model 37.

The prudent message here is not that pricing upheaval is inevitable. It is that enterprise teams should understand their current exposure. If your organization depends heavily on Confluent-specific managed services or premium platform capabilities, it makes sense to track future announcements closely. You must model what different pricing scenarios could mean for your environment.
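One lightweight way to model pricing scenarios is to compare a volume-based bill with a core-based bill and find where they cross. The sketch below is illustrative only: every number is a placeholder invented for this example, not a real IBM or Confluent price, and the two cost functions are simplified assumptions about how such models could be shaped.

```python
def usage_based_cost(gb_per_month, price_per_gb):
    """Monthly cost when billing follows data volume."""
    return gb_per_month * price_per_gb


def core_based_cost(cores, price_per_core):
    """Monthly cost when billing follows provisioned processor cores."""
    return cores * price_per_core


def break_even_volume(cores, price_per_core, price_per_gb):
    """Monthly volume at which the two models cost the same."""
    return core_based_cost(cores, price_per_core) / price_per_gb


# Placeholder inputs for illustration only; none of these are real prices.
scenario = {"gb_per_month": 50_000, "price_per_gb": 0.10,
            "cores": 32, "price_per_core": 200.0}

usage = usage_based_cost(scenario["gb_per_month"], scenario["price_per_gb"])
core = core_based_cost(scenario["cores"], scenario["price_per_core"])
threshold = break_even_volume(scenario["cores"], scenario["price_per_core"],
                              scenario["price_per_gb"])
```

Replacing the placeholders with your own contract terms and traffic forecasts shows how far your workload sits from the break-even point under each scenario.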

Roadmap and Platform Dependency

It is also reasonable to ask how the roadmap may evolve after such a large acquisition. IBM’s public rationale for the deal centers on integrating Confluent with IBM’s broader data, integration, and infrastructure portfolio 1 3 7. That could be positive for customers who want tighter alignment between event streaming and the rest of the IBM stack. But it also means existing customers should take a fresh look at where they rely on specific operational features, managed connectors, governance tools, or cloud workflows 10.

Importantly, this does not mean Apache Kafka itself is suddenly at risk. Factor House argues that the open-source Kafka project is likely to remain healthy because multiple vendors and cloud providers have a stake in it 10. The more practical concern is dependency on the proprietary and operational layers around the platform. That is where customers may want better visibility into what is portable, what is not, and what would be hardest to unwind if strategy or tooling changed later.

Questions Enterprise Architects Should Be Asking Now

The acquisition does not mean teams need to migrate, panic, or redesign anything immediately. It does mean they should ask some disciplined questions now while they still have time to answer them calmly 10.

Useful questions include:

- Which Confluent-specific features are we using versus standard Kafka APIs?

- If we needed to shift providers later, what would actually break?

- How much of our CI/CD and data pipeline tooling depends on Confluent-specific services?

- Are our monitoring and operational workflows tightly coupled to provider-native tooling?

Those questions are not anti-IBM. They are good platform-governance questions in any post-acquisition environment.
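A first pass at the "Confluent-specific versus standard Kafka" question can be automated with a simple configuration scan. The sketch below is a heuristic under stated assumptions: it flags properties-style lines whose key or value contains a `confluent.` or `io.confluent.` prefix. The function name and the sample hostnames are hypothetical.

```python
CONFLUENT_MARKERS = ("confluent.", "io.confluent.")


def audit_properties(text):
    """Split properties-style config lines into vendor-specific and portable keys.

    Heuristic only: a line is flagged when its key or value contains a
    Confluent-style prefix. Treat the result as a starting point for review.
    """
    flagged, portable = [], []
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, value = (part.strip() for part in line.split("=", 1))
        if any(m in key or m in value for m in CONFLUENT_MARKERS):
            flagged.append(key)
        else:
            portable.append(key)
    return flagged, portable


sample = """
# hypothetical client configuration used only for this illustration
bootstrap.servers=broker.example.internal:9092
acks=all
value.serializer=io.confluent.kafka.serializers.KafkaAvroSerializer
schema.registry.url=https://registry.example.internal
confluent.monitoring.interceptor.bootstrap.servers=broker.example.internal:9092
"""

flagged, portable = audit_properties(sample)
```

A prefix scan like this will miss dependencies that carry no vendor prefix (here, `schema.registry.url` lands in the portable list even though it points at a schema registry service), so it complements a manual review and does not replace one.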

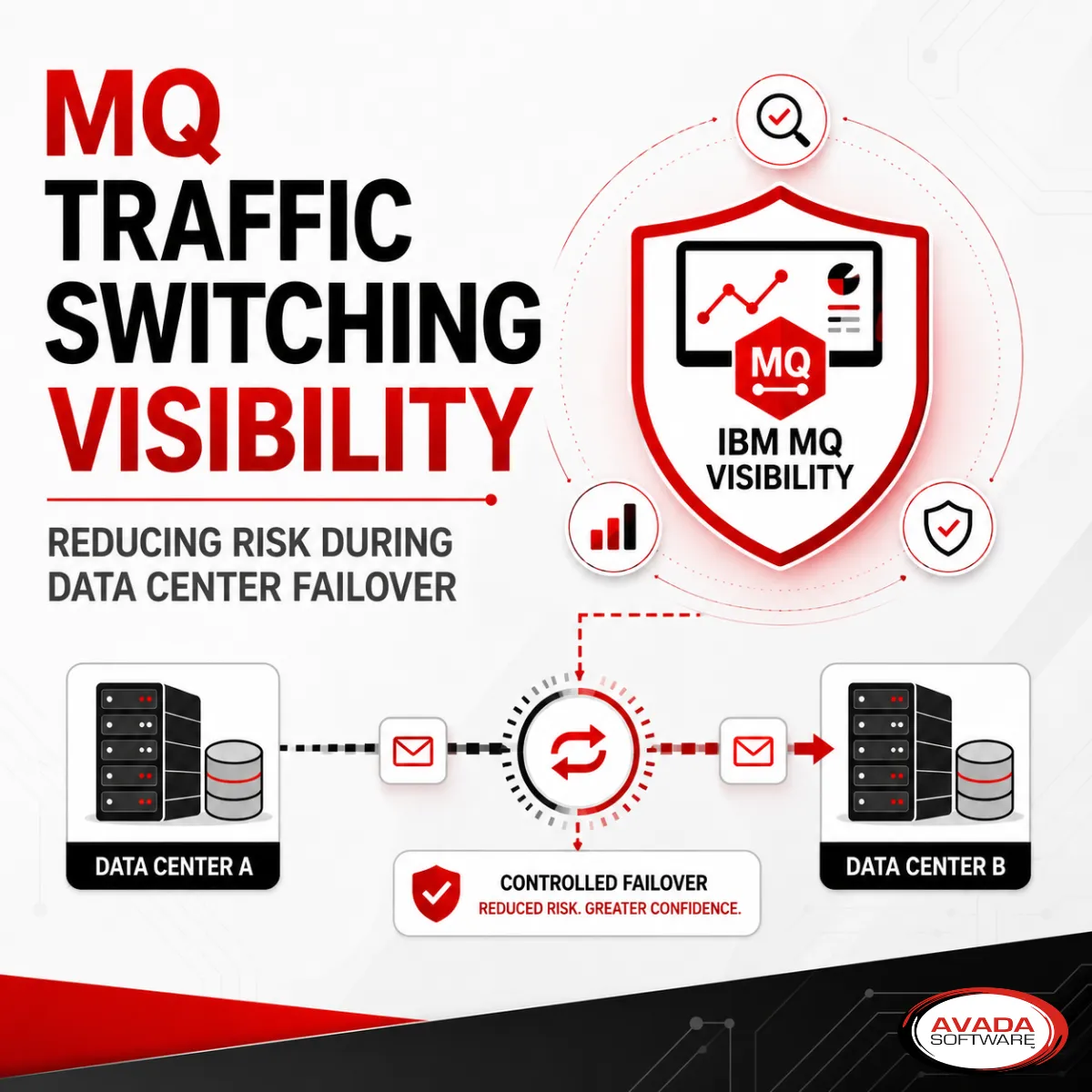

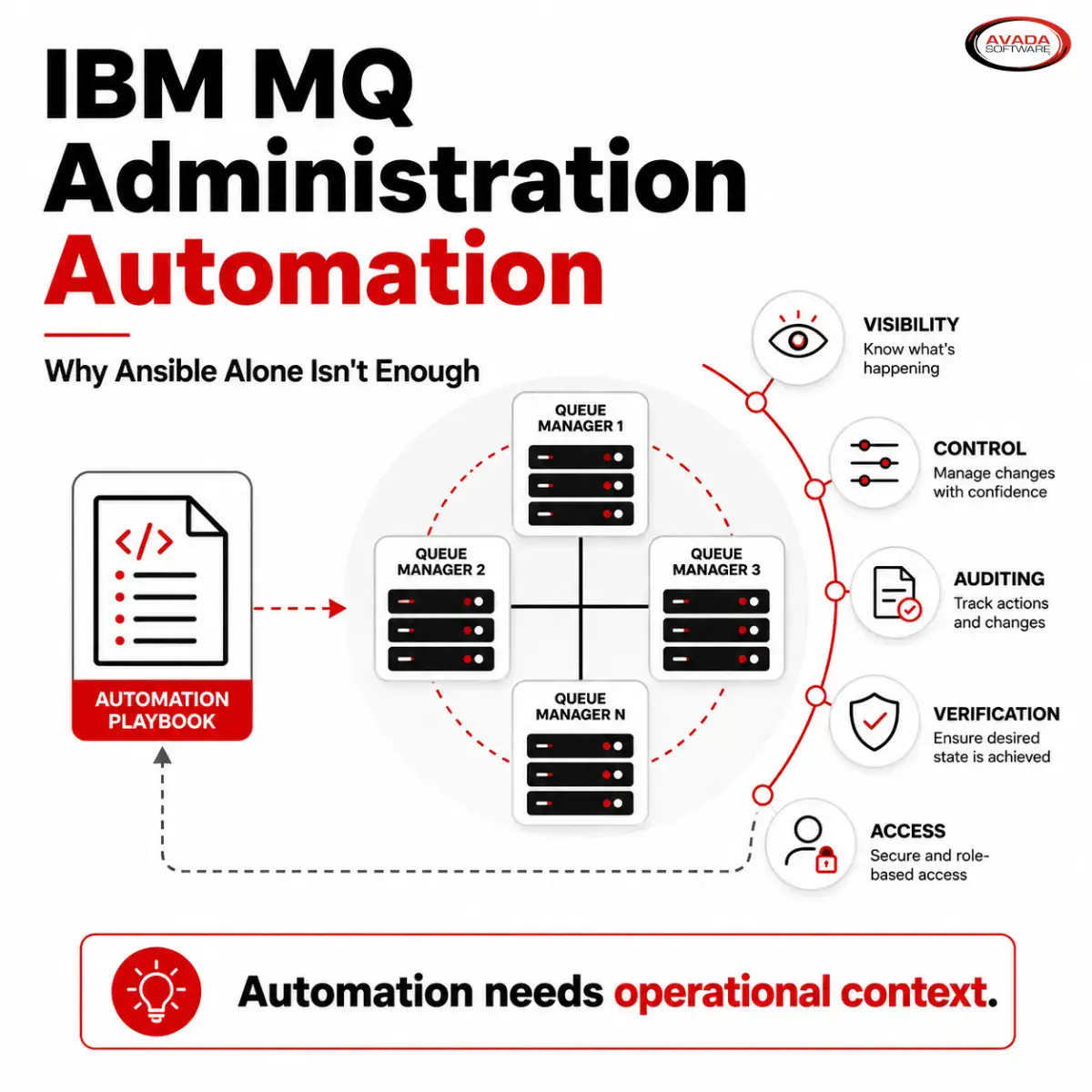

Why Independent Middleware Monitoring Is Critical

This is where the conversation becomes especially important for teams running both MQ and Confluent. Once mission-critical transactions, event streaming, integration flows, and downstream analytics begin to operate as one broader architecture, the real operational challenge becomes visibility.

IBM MQ exposes instrumentation events for errors, warnings, threshold breaches, and configuration changes so they can be incorporated into a system management application 38. In distributed systems more broadly, observability matters because issues are often difficult to reproduce locally. They are easy to miss when telemetry is scattered across separate tools 39.

That is why independent middleware monitoring deserves special attention here. When daily operational workflows are coupled to provider-specific tooling, that becomes both a technical dependency and a familiarity dependency 10. The issue is not that native vendor tools are inherently bad. The issue is that if your observability and operational habits are fully embedded inside the same ecosystem that provides the infrastructure, you may lose flexibility precisely when you need it most.

An independent monitoring layer can help teams answer questions that cut across product boundaries:

- Is the slowdown starting in MQ, in Confluent, or in the application between them?

- Is queue depth growing because downstream consumers are lagging?

- Is the issue transactional, streaming-related, or infrastructure-related?

- Are we seeing a platform problem, an application problem, or both?

Independent monitoring is not just about preference. It is about preserving operational clarity in an environment that is becoming more integrated, more distributed, and potentially more vendor-concentrated at the same time.
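The cross-product questions above come down to correlating signals from both sides. As a rough sketch, assume you already collect MQ queue depth and Kafka offsets at the same sampling moments. The function names, thresholds, and sample values below are hypothetical and are not taken from any monitoring product.

```python
def consumer_lag(end_offsets, committed_offsets):
    """Total lag across partitions: log end offset minus committed offset."""
    return sum(
        max(end - committed_offsets.get(partition, 0), 0)
        for partition, end in end_offsets.items()
    )


def is_growing(samples, min_increase=1):
    """True when each sample exceeds the previous one by at least min_increase."""
    return len(samples) >= 2 and all(
        later - earlier >= min_increase
        for earlier, later in zip(samples, samples[1:])
    )


def diagnose(queue_depths, lag_samples):
    """Rough triage from two time series sampled at the same moments."""
    depth_up = is_growing(queue_depths)
    lag_up = is_growing(lag_samples)
    if depth_up and lag_up:
        return "both sides backing up: check shared infrastructure or the bridge"
    if depth_up:
        return "queue depth rising alone: check the MQ consumer or bridge"
    if lag_up:
        return "consumer lag rising alone: check downstream Kafka consumers"
    return "no sustained growth in either signal"


# Hypothetical samples; real values would come from your monitoring layer.
lag_now = consumer_lag({0: 1200, 1: 900}, {0: 1150, 1: 900})
verdict = diagnose(queue_depths=[10, 40, 95], lag_samples=[50, 50, 48])
```

Real triage needs more than two signals, but even this small comparison shows why having both series in one place matters: neither trend on its own tells you which side of the boundary the problem sits on.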

How Infrared360 Fits the Need

This is where Infrared360 fits naturally into the picture. Infrared360 is an agentless platform designed to monitor, manage, automate, and analyze middleware environments through a single interface. This includes environments that span IBM MQ and Kafka.

For organizations trying to manage both transactional messaging and event streaming together, cross-technology visibility is incredibly useful. It is much better than treating each platform as a separate operational island.

Infrared360 is built around the idea of reducing tool sprawl. It gives teams one place to monitor and manage multiple middleware technologies. Our platform emphasizes real-time monitoring, alerting, and operational control for both MQ and Kafka environments. Our Trusted Spaces model is specifically designed to provide role-based access. Administrators, engineers, developers, and support teams can each safely see the information and actions appropriate to their role.

An organization can approach MQ and Kafka as one operational problem without flattening the differences between them. MQ administrators can still focus on queue managers and transactional behavior. Kafka teams can still focus on broker health, throughput, topics, and lag. Leadership and operations teams can maintain a broader view of the end-to-end middleware environment rather than forcing everyone to work through disconnected tools and isolated dashboards.

As IBM brings more of the messaging and streaming stack under one strategic umbrella, many customers will want an operational layer of their own. Infrared360 is positioned to be that layer.

Learn More About Infrared360®

If your organization is planning for a future in which MQ and Kafka increasingly operate side by side, this is a good time to evaluate your monitoring approach. The more integrated the stack becomes, the more important it is to preserve clear and independent visibility across the whole flow.

To learn more about how Infrared360 can help you monitor and manage MQ and Kafka together, explore the product details or request a live demo from Avada Software.

Conclusion: Adapting Your Middleware Strategy for 2026

The IBM-Confluent deal does not erase the technical distinction between MQ and Kafka. IBM MQ still addresses one class of enterprise problem very well. Confluent still addresses another. What the acquisition changes is the strategic context. More enterprises will now evaluate them not only as different tools, but as complementary parts of the same broader data architecture 1 3 7.

That makes this a moment for clear thinking rather than alarm. Teams do not need to assume the worst. But they do need to understand where their dependencies sit. They must know how much of their tooling is provider-specific. They must ensure their observability model gives them enough independence to operate confidently as the landscape evolves.

The challenge is no longer just choosing between MQ and Kafka. It is making sure you can run both effectively, see across both clearly, and retain enough operational control to adapt as the platform strategy around them changes.

Works Cited

- IBM Completes Acquisition of Confluent, Making Real Time Data the Engine of Enterprise AI and Agents https://newsroom.ibm.com/2026-03-17-ibm-completes-acquisition-of-confluent,-making-real-time-data-the-engine-of-enterprise-ai-and-agents

- IBM acquires Confluent to make AI work faster with live data — TFN – Tech Funding News, https://techfundingnews.com/ibm-acquires-confluent-to-make-ai-work-faster-with-live-data/

- IBM to Acquire Confluent to Create Smart Data Platform for Enterprise Generative AI, https://newsroom.ibm.com/2025-12-08-ibm-to-acquire-confluent-to-create-smart-data-platform-for-enterprise-generative-ai

- IBM MQ vs. Kafka vs. ActiveMQ: Comparing Message Brokers | OpenLogic https://www.openlogic.com/blog/ibm-mq-vs-kafka-vs-activemq

- Kafka vs. IBM MQ: Key Differences and Comparative Analysis – GitHub https://github.com/AutoMQ/automq/wiki/Kafka-vs.-IBM-MQ:-Key-Differences-and-Comparative-Analysis

- Confluent Cloud Q1 2026: Queues for Kafka, KCP for migration, https://www.confluent.io/blog/2026-q1-confluent-cloud-launch/

- IBM and Confluent announce ability to connect, process and govern real-time data for applications and AI agents, https://www.ibm.com/new/announcements/ibm-and-confluent-announce-ability-to-connect-process-and-govern-real-time-data-for-applications-and-ai-agents

- IBM’s Confluent Acquisition Is About Event-Driven AI – The New Stack, https://thenewstack.io/ibms-confluent-acquisition-is-about-event-driven-ai/

- IBM Completes Acquisition of Confluent, Boosting Real-Time Data for the Organization, https://www.dbta.com/Editorial/News-Flashes/IBM-Completes-Acquisition-of-Confluent-Boosting-Real-Time-Data-for-the-Organization-173970.aspx

- What the IBM Confluent acquisition means for Kafka users | Factor House, https://factorhouse.io/articles/what-the-ibm-confluent-acquisition-means-for-kafka-users

- IBM Event Automation Reviews & Ratings 2026 | Gartner Peer Insights, https://www.gartner.com/reviews/product/ibm-event-automation

- The winning combination for real-time insights: Messaging and … – IBM, https://www.ibm.com/new/product-blog/the-winning-combination-for-real-time-insights-messaging-and-event-driven-architecture

- Compare Apache Kafka vs IBM MQ 2026 | TrustRadius, https://www.trustradius.com/compare-products/apache-kafka-vs-ibm-mq

- Compare Confluent vs IBM MQ 2026 | TrustRadius, accessed April 8, 2026, https://www.trustradius.com/compare-products/confluent-io-vs-ibm-mq

- Confluent, an IBM Company, https://www.ibm.com/products/confluent

- Connect on z/OS for Confluent Platform, accessed April 8, 2026, https://docs.confluent.io/platform/current/connect/connect-zos.html

- IBM Completes Acquisition of Confluent, Making Real Time Data the Engine of Enterprise AI and Agents, https://www.confluent.io/press-release/ibm-completes-acquisition-of-confluent/

- Confluent Recognized in 2025 Gartner® Magic Quadrant™ for Data Integration Tools, https://www.confluent.io/blog/confluent-recognized-for-data-integration/

- IBM watsonx.data integration, https://www.ibm.com/products/watsonx-data-integration

- IBM Acquires Confluent: What It Means for Your Kafka Stack – Scalytics, https://www.scalytics.io/en-us/blog/ibm-confluent-acquisition-kafka-architecture-2026

- IBM is a Leader in seven AI-related Gartner® Magic Quadrant™ reports in 2025 and 2026, https://www.ibm.com/new/announcements/ibm-is-a-leader-in-seven-ai-related-gartner-magic-quadrant-reports-in-2025-and-2026

- IBM watsonx.data intelligence, https://www.ibm.com/products/watsonx-data-intelligence

- IBM to Acquire Confluent, https://www.confluent.io/blog/ibm-to-acquire-confluent/

- IBM Event Automation, https://www.ibm.com/products/event-automation

- IBM Z Digital Integration Hub overview, https://www.ibm.com/docs/en/zdih/2.1.x?topic=z-digital-integration-hub-overview

- Confluent on IBM zSystems: the leading event streaming solution for your application modernization strategy, https://www.confluent.io/partner/ibm/

- IBM Z Digital Integration Hub, https://www.ibm.com/products/z-digital-integration-hub

- IBM zDIH solution components, https://www.ibm.com/docs/en/zdih/2.1.x?topic=overview-zdih-solution-components

- IBM Z Content Solutions | Z Digital Integration Hub, https://www.ibm.com/support/z-content-solutions/z-digital-integration-hub/

- IBM watsonx.data, https://www.ibm.com/products/watsonx-data

- Confluent Blog, https://www.confluent.io/blog/confluent-intelligence-for-ai/

- Streaming Agents with Confluent Intelligence in Confluent Cloud, https://docs.confluent.io/cloud/current/ai/streaming-agents/overview.html

- Confluent vs IBM 2026 | Gartner Peer Insights, https://www.gartner.com/reviews/market/event-stream-processing/compare/confluent-vs-ibm

- IBM watsonx.data integration, https://www.ibm.com/docs/en/software-hub/5.3.x?topic=services-watsonxdata-integration

- Data integration in the age of multi-agent architectures | IBM, https://www.ibm.com/think/perspectives/data-integration-multi-agent-architectures

- IBM Documentation, “Virtual processor core (VPC)”, https://www.ibm.com/docs/en/license-metric-tool/9.2.0?topic=metrics-virtual-processor-core-vpc

- IBM Support, “IBM Confluent Cloud_XaaS”, https://www.ibm.com/support/pages/ibm-confluent-cloudxaas

- IBM Documentation, “Using IBM MQ events”, https://www.ibm.com/docs/en/ibm-mq/9.2.x?topic=zos-using-mq-events

- OpenTelemetry, “Observability primer”, https://opentelemetry.io/docs/concepts/observability-primer/
