This is Part 1 of a 6-part series exploring how to use IBM MQ as a reliable ingestion layer for AI and RAG.

For the full article, click here

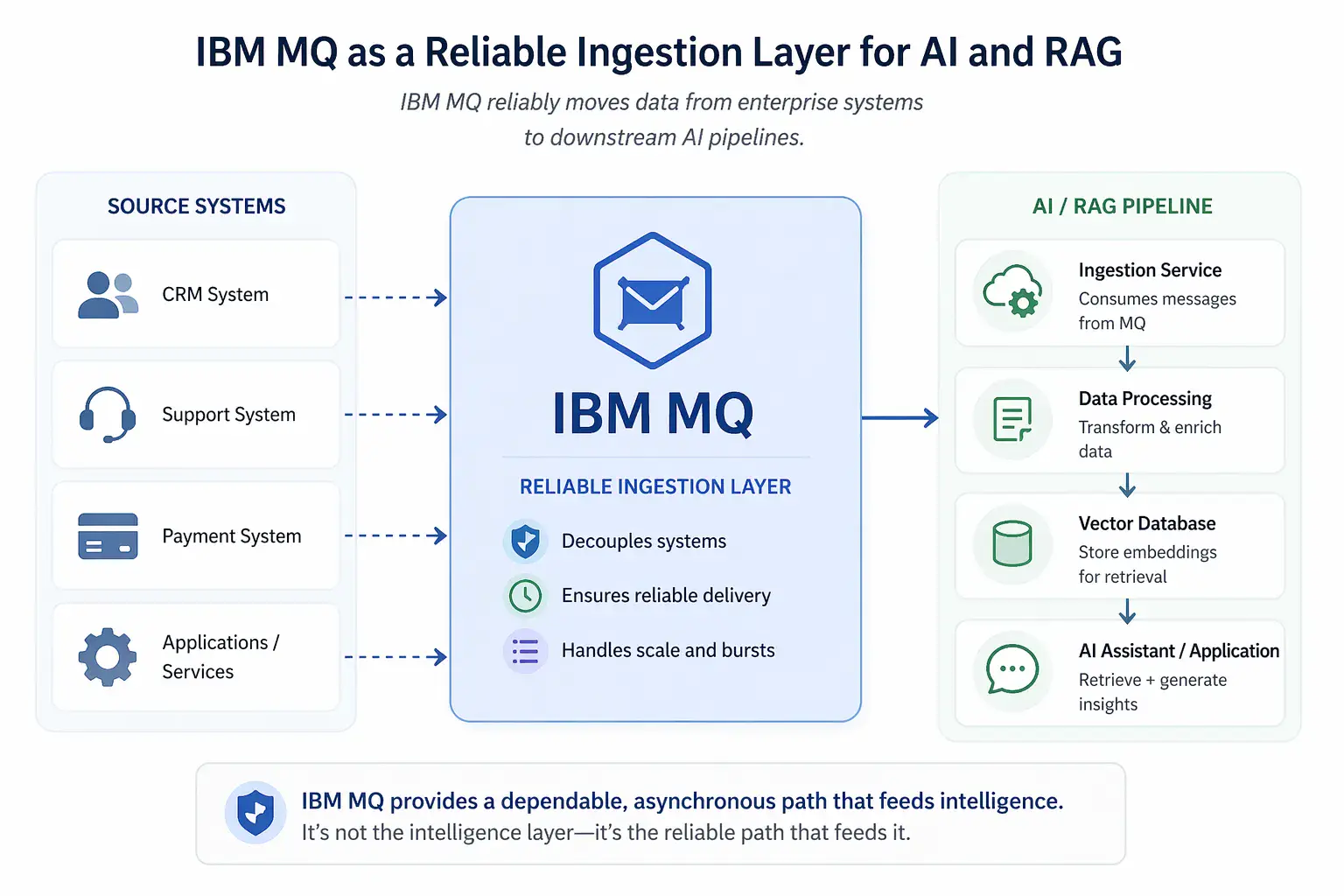

Using IBM MQ and RAG Together: A Reliable Ingestion Layer for AI

Enterprise AI projects rarely fail because the model is hard to use. They fail because the right data does not reliably reach the system that needs it.

That is where IBM MQ becomes relevant.

IBM MQ is not an AI tool. It is not a vector database or a retrieval engine. But it is already responsible for moving high-value business data across enterprise systems. That makes it a natural fit for feeding data into AI pipelines—especially those built on retrieval-augmented generation (RAG).

What IBM MQ does in a RAG architecture

In a RAG system, data must be:

- collected from source systems

- transformed into usable text

- enriched with metadata

- embedded into vectors

- stored for retrieval

IBM MQ plays a role at the very beginning of that pipeline.

Instead of forcing source systems to integrate directly with AI infrastructure, they can continue publishing messages to MQ. Downstream ingestion services then consume those messages and prepare them for AI use.

This preserves decoupling and avoids tight coupling between business systems and AI platforms.

Why this matters

Many enterprises already rely on MQ for:

- support ticket events

- transactional updates

- workflow triggers

- integration between systems

That means the data needed for AI already exists in motion.

Rather than rebuilding ingestion from scratch, MQ can serve as a stable, asynchronous ingestion backbone.

Why this changes operational expectations

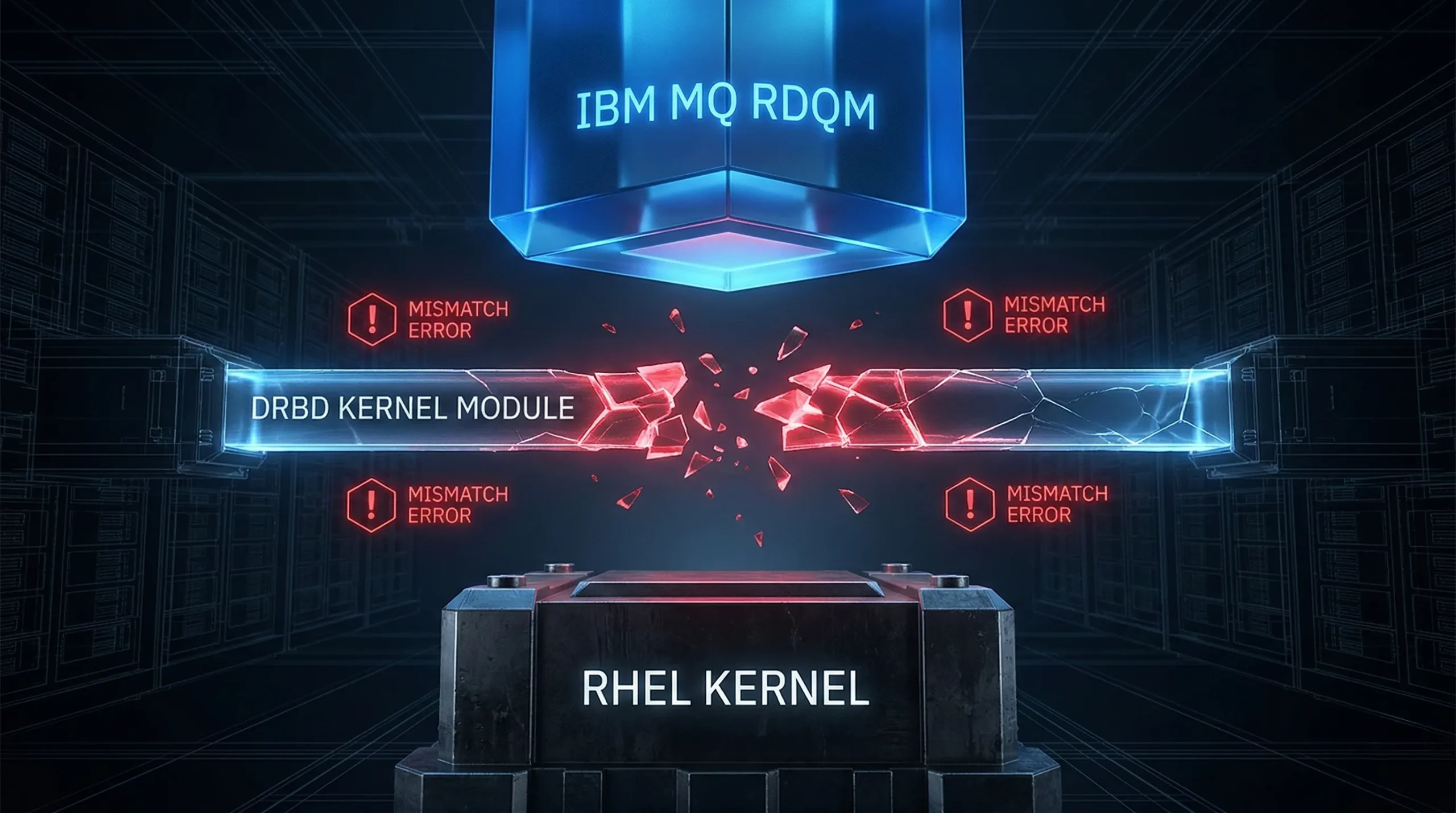

Using IBM MQ as an ingestion layer for AI and RAG does not reduce the need for operational visibility. It increases it.

In a traditional MQ environment, monitoring often focuses on whether messages are moving, queues are building up, channels are healthy, and queue managers are available. Those signals still matter. But when MQ feeds a downstream AI or RAG pipeline, the flow now depends on more than MQ alone.

Messages may move successfully through MQ but still encounter delays or failures later in the path, such as during ingestion, transformation, embedding, or indexing. That means teams need visibility not only into the MQ environment, but also into the broader ingestion path that determines whether data actually becomes usable by the AI system.

The goal is not just to confirm that messages were sent. The goal is to understand whether the pipeline is functioning as expected.

Key takeaways

- IBM MQ can serve as a reliable ingestion layer for AI and RAG pipelines without requiring source systems to integrate directly with AI infrastructure.

- MQ’s value in this role is not intelligence or retrieval, but dependable transport, decoupling, and delivery of business data.

- Once MQ feeds downstream AI pipelines, the architecture introduces new dependencies that may not be visible from MQ metrics alone.

- Visibility across the full ingestion path becomes critical to keeping the pipeline reliable in production.

This is Part 1 of a 6-part series exploring how to use IBM MQ as a reliable ingestion layer for AI and RAG.

For the full article, click here

More Infrared360® Resources