It still amazes me to this day how IT organizations, even up to the CIO, seem to enjoy pain.

Unlike the characters on Mad Men, my IT career was spent in a land where employees did not “mix business with pleasure”. Perhaps that’s the reason for the pain. Most of it is self-inflicted. For me, the entire point of being in technology is “innovation”: automating processes and functions so that they no longer need to be handled manually, or at least taking the complication out of a process to make it easier. Our motto became “Work Smarter, Not Harder”.

We read articles about agile development. Then we read about how it can be quite misguided when used for ‘infrastructure’ projects, where stability and security are key. We read about how DevOps is supposed to be the bridge to agility. We read how container technologies such as Docker and Kubernetes ease the deployment of applications, and how virtual cloud infrastructures make sizing and deploying applications a breeze. There are tools like Chef, Puppet, and Ansible, all created to make technology easier and less painful to control and deploy.

Alas, the time it takes to get technology out the door in good order, stable and secure, has not improved much. The quality doesn’t seem to improve either. I’m not speaking from the office of upper management (though that’s where I now sit). I’m speaking from having been part of rollouts in major corporations, interacting with the teams, and being privy to the results. I’m speaking from the feedback I receive from the technology people on the front lines. I’m speaking from hearing the ‘actual’ project implementation timelines vs. what was forecast before the project began.

It’s not the plan that makes a project successful, it’s the planning.

Though we have all this technology at our fingertips, it comes at us fast and furious. It comes at us from someplace where it worked, for various reasons, for someone, at some time. Amazon’s cloud, for instance, came from Amazon’s own need to handle IT sizing and speed to market as a result of its growth. That doesn’t mean that it’s a match for EVERY company. It really depends on the business model; on the current infrastructure of hardware, OS, software, and tooling; on whether there are legacy applications to consider; and so on. A recent visit with a client revealed that they were ‘moving to AWS’. I said, “But your applications all run on z/OS and AIX.” After a brief conversation about whether those applications would first be ported to Linux internally, and the realization that this was never in the plan, the response was, “Well, we told our executives that, but it didn’t really seem to change their plan.”

Patient: Doctor, it hurts when I do this.

Dr: Well, then don’t do that.

Many times, pain can be traced back to communication: I can’t believe they put that there! Why didn’t they move the #^$% thing out of the way? Why did someone leave that rake there for me to step on? Why didn’t anyone tell me about the bridge being closed for repairs? Now I’m going to be late!

It’s much the same with IT. Smart people who do challenging tasks need to communicate with clarity (that means providing detail). Corporations are reluctant to send people to training or industry tech shows these days, leaving everyone to learn these technologies on their own time and on their own dime. The operative word is ‘own’, and ‘own’ = ‘lone’. Nothing against working from home, but getting a Skype text is not the same as the old-fashioned face-to-face sit-down with TCM (tone, content, and movement).

So where, then, does a person learn best practices? Where do they learn what to avoid? What shortcuts to take (and when not to)? Who is the go-to GURU on these processes and technologies? Well, in the world of social media, it’s some blogger who left a note on GitHub, a Listserv, or LinkedIn Groups (shudder). Not exactly verifiable material. It’s like Wikipedia: it looks professional, but it could be entirely incorrect.

Repeating mistakes:

Patient: Look at this, doctor.

Dr: Look at — oh! … Did you ever have that before?

Patient: Yes, I did.

Dr: Well, you got it again!

Too often, technology decisions are made without facts, clear definitions of requirements and objectives, research, pilots, or thoughtful implementation. Rather, it is “we have to embrace [insert flavor-of-the-day technology], and we’ll figure out why later.” There never seems to be time to do things right, but there is always time to do things over (usually during late nights and weekends). If the technology or the timing is not right, another option just might be the smarter one.

What seems to happen is that folks try to shoehorn things into grander schemes that are handed down by upper management. That doesn’t mean the schemes are handed down without research. However, it does mean there must be some two-way communication about the fit of the new plan. Sometimes it may fit better long term, and that’s fine. There needs to be an allowance of time and budget for training, for grappling with the new technologies, and for communication among experts to make sure we all agree that we are solving the same problem and on how we can verify that it’s working. That has to be among the first steps of the project timeline.

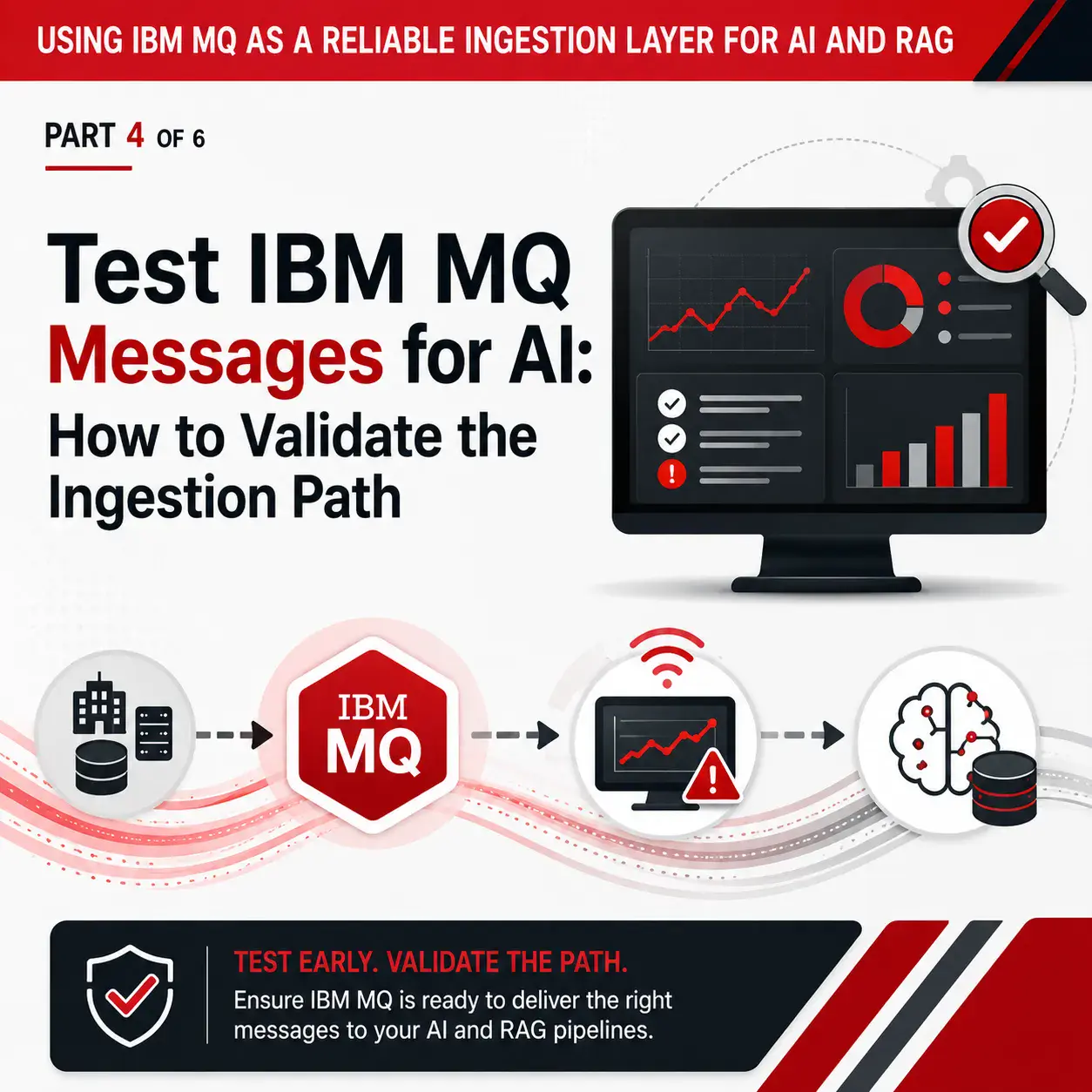

I’m constantly amazed at how many technologies are tested ‘in production’ or darn close to it. Take certificates, for instance. People are less likely to set up encryption in a testing or QA environment, since there is less risk. However, that means the first time you encrypt an app, a link, or a container is when you move it to Production. Not the greatest place to practice. This also seems to go against the conventions of DevOps, since the entire container (app and parts) should work the same in ENV 1 as in ENV 2.
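One way to catch this kind of gap before rollout day is a simple parity check between environments. What follows is only a minimal sketch in Python, not a description of any particular tool. The environment names, the setting keys (`tls_enabled`, `cert_source`), and the `parity_gaps` helper are all hypothetical and made up for illustration; a real shop would pull these values from whatever configuration source it actually uses.

```python
from typing import Any, Dict, List

# Hypothetical per-environment settings. The names and values are
# illustrative assumptions only, not taken from any real deployment.
ENVIRONMENTS: Dict[str, Dict[str, Any]] = {
    "dev":  {"tls_enabled": False, "cert_source": None},
    "qa":   {"tls_enabled": False, "cert_source": None},
    "prod": {"tls_enabled": True,  "cert_source": "internal-ca"},
}


def parity_gaps(envs: Dict[str, Dict[str, Any]], reference: str = "prod") -> List[str]:
    """List every setting in a non-reference environment that differs
    from the reference environment (Production by default)."""
    gaps: List[str] = []
    ref_settings = envs[reference]
    for name, settings in envs.items():
        if name == reference:
            continue
        for key, expected in ref_settings.items():
            actual = settings.get(key)
            if actual != expected:
                gaps.append(f"{name}: {key}={actual!r} ({reference} has {expected!r})")
    return gaps


if __name__ == "__main__":
    # Each reported gap is something that would otherwise be exercised
    # for the first time in Production.
    for gap in parity_gaps(ENVIRONMENTS):
        print(gap)
```

Run against the sample data, the check flags that neither dev nor qa ever exercises TLS or a certificate source. An empty result would mean the lower environments rehearse the same settings Production depends on.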

The result is PAIN. Either the pain of getting it wrong, or the pain of urgency, or both. Usually after an event like that, people want to recover. But that inevitably means they shelve the lessons until the next time, when it becomes a start-from-scratch effort all over again.